Yann Neuhaus

OTDS – Installation of Replicas fail if the OT Admin password is too long?

For simplicity, in this blog, I will refer to the first OTDS instance as the “Primary” (the synchronization master host, installed with ISREPLICA_TOPOLOGY=0=FALSE) and any additional instances as “Replicas” (installed with ISREPLICA_TOPOLOGY=1=TRUE). Over the past few years, I have installed and worked on around 20–30 different OTDS environments, some with a single instance and others with multiple instances (HA). Overall, it is not a bad piece of software, even though it could use improvements in certain areas (e.g.: c.f. this blog). However, it was only after I started installing a few Replicas on recent OTDS versions (using a database backend instead of OpenDJ) that I encountered a rather unusual issue.

1. OTDS Replica installation failureSingle-instance installations using the silent properties file were always successful, and most multi-instance installations worked as well. However, I encountered a very specific issue twice: the Primary instance would install successfully, but the Replica installation would fail with an error stating “parameter JDBC_CONNECTION_STRING not defined“. Since everything runs in automated environments (Kubernetes or Ansible), I knew it was not a human error. When comparing the silent properties files, everything looked correct. The file used on the Primary was exactly the same as the one used on the Replica, except for “ISREPLICA_TOPOLOGY=0” and “ENCRYPTION_KEY=” on the Primary versus “ISREPLICA_TOPOLOGY=1” and “ENCRYPTION_KEY=XXXXXXX” on the Replica.

This is the expected configuration. A Replica needs to take the value of “directory.bootstrap.CryptSecret” from the “otds.properties” file of the Primary and use that value for “ENCRYPTION_KEY“. Therefore, when you install the Primary instance, the value remains empty because nothing is installed yet. During the Replica installation, the automation retrieves this value and populates the parameter accordingly. But then why would the Primary installation succeed while the Replica fails when using the exact same silent properties file? Quite strange, right? First of all, I tried running the installer manually (outside of Kubernetes or Ansible) to see whether additional details would appear in the console:

[tomcat@otds-1 workspace_otds]$ /app/scripts/workspace_otds/otds/setup -qbi -rf /app/scripts/workspace_otds/otds/silent.properties

OpenText Directory Services 24.4.0

Error, parameter JDBC_CONNECTION_STRING not defined.

[tomcat@otds-1 workspace_otds]$

The generated log file was not really helpful either:

[tomcat@otds-1 workspace_otds]$ cat otds.log

...

2025-08-08 6:38:40 chmod ran successfully on /etc/opentext/unixsetup

2025-08-08 6:38:40 Setting environment variable "ACTION" to "-1" : Success

2025-08-08 6:38:40 Setting environment variable "UPGRADE" to "0" : Success

2025-08-08 6:38:40 Setting environment variable "PATCH" to "0" : Success

2025-08-08 6:38:40 Setting environment variable "INSTALLED" to "0" : Success

2025-08-08 6:38:40 Setting environment variable "INSTALLEDVERSION" to "0.0.0" : Success

2025-08-08 6:38:40 Setting environment variable "PRODUCTINSTANCE" to "1" : Success

2025-08-08 6:38:40 Setting environment variable "PRODUCTVERSION" to "24.4.0.4503" : Success

2025-08-08 6:38:40 Setting environment variable "PRODUCTNAME" to "OpenText Directory Services" : Success

2025-08-08 6:38:40 Setting environment variable "PRODUCTID" to "OTDS" : Success

2025-08-08 6:38:40 Setting environment variable "PATCHVERSION" to "0" : Success

2025-08-08 6:38:40 Setting environment variable "ROOTUSER" to "0" : Success

2025-08-08 6:38:40 Setting environment variable "Main_INSTALLED" to "-1" : Success

2025-08-08 6:38:40 Setting environment variable "INST_GROUP" to "tomcat" : Success

2025-08-08 6:38:40 Setting environment variable "INST_USER" to "tomcat" : Success

2025-08-08 6:38:40 Setting environment variable "INSTALL_DIR" to "/app/tomcat/app_data/otds" : Success

2025-08-08 6:38:40 Setting environment variable "TOMCAT_DIR" to "/app/tomcat" : Success

2025-08-08 6:38:40 Setting environment variable "PRIMARY_FQDN" to "otds-1.otds.otdsdev.svc.cluster.local" : Success

2025-08-08 6:38:40 Setting environment variable "ISREPLICA_TOPOLOGY" to "1" : Success

2025-08-08 6:38:40 Setting environment variable "IMPORT_DATA" to "0" : Success

2025-08-08 6:38:40 Setting environment variable "OTDS_PASS" to "*****" : Success

2025-08-08 6:38:40 Setting environment variable "ENCRYPTION_KEY" to "mqLgucZ8UIUnNcLwjwmhNw==" : Success

2025-08-08 6:38:40 Setting environment variable "MIGRATION_OPENDJ_URL" to "" : Success

2025-08-08 6:38:40 Setting environment variable "MIGRATION_OPENDJ_PASSWORD" to "*****" : Success

2025-08-08 6:38:40 Setting environment variable "JDBC_CONNECTION_STRING" to "" : Success

2025-08-08 6:38:40 Setting environment variable "JDBC_USERNAME" to "" : Success

2025-08-08 6:38:40 Setting environment variable "JDBC_PASSWORD" to "*****" : Success

2025-08-08 6:38:40 Setting environment variable "ACTION" to "3" : Success

2025-08-08 6:38:40 Setting environment variable "Main_ACTION" to "3" : Success

2025-08-08 6:38:40 Adding Pre-req "TOMCAT7_HIGHER"

...

2025-08-08 6:38:40 Action #1 ended: OK

2025-08-08 6:38:40 Setting environment variable "PRIMARY_FQDN" to "otds-1.otds.otdsdev.svc.cluster.local" : Success

2025-08-08 6:38:40 Setting environment variable "ISREPLICA_TOPOLOGY" to "1" : Success

2025-08-08 6:38:40 Skipping IMPORT_DATA parameter (condition is false)

2025-08-08 6:38:40 Skipping OTDS_PASS parameter (condition is false)

2025-08-08 6:38:40 Setting environment variable "ENCRYPTION_KEY" to "mqLgucZ8UIUnNcLwjwmhNw==" : Success

2025-08-08 6:38:40 Skipping MIGRATION_OPENDJ_URL parameter (condition is false)

2025-08-08 6:38:40 Skipping MIGRATION_OPENDJ_PASSWORD parameter (condition is false)

2025-08-08 6:38:40 Error, parameter JDBC_CONNECTION_STRING not defined.

2025-08-08 6:38:40 Setup Ended: 1

2025-08-08 6:38:40 ============= Verbose logging Ended =============

[tomcat@otds-1 workspace_otds]$

For reference, here is the content of the “silent.properties” file that this Replica installation uses:

[tomcat@otds-1 workspace_otds]$ cat otds/silent.properties

[Setup]

Id=OTDS

Version=24.4.0.4503

Patch=0

Basedir=/app/scripts/workspace_otds/otds

Configfile=/app/scripts/workspace_otds/otds/setup.xml

Action=Install

Log=/app/scripts/workspace_otds/otds/otds.log

Instance=1

Feature=All

[Property]

INST_GROUP=tomcat

INST_USER=tomcat

INSTALL_DIR=/app/tomcat/app_data/otds

TOMCAT_DIR=/app/tomcat

PRIMARY_FQDN=otds-1.otds.otdsdev.svc.cluster.local

ISREPLICA_TOPOLOGY=1

IMPORT_DATA=0

OTDS_PASS=m1z6GX+HEX81DRpC

ENCRYPTION_KEY=mqLgucZ8UIUnNcLwjwmhNw==

MIGRATION_OPENDJ_URL=

MIGRATION_OPENDJ_PASSWORD=

JDBC_CONNECTION_STRING=jdbc:oracle:thin:@(DESCRIPTION=(ADDRESS_LIST=(ADDRESS=(PROTOCOL=TCP)(HOST=db_host.domain.com)(PORT=1521)))(CONNECT_DATA=(SERVICE_NAME=db_svc.domain.com)))

JDBC_USERNAME=OTDS

JDBC_PASSWORD=Shu#Asd#Tgb;6799

[tomcat@otds-1 workspace_otds]$

(These are the real passwords from that environment. I have changed them since then, obviously, but I included them so you can understand the details below. The encryption key is altered, though – the system originally took the real one from the “Primary” instance.)

3. Status of the installer created/managed filesAfter the failure, I checked the parameter file that the OTDS installer populates during installation, but it was mostly empty and not yet filled:

[tomcat@otds-1 workspace_otds]$ cat /etc/opentext/unixsetup/OTDS_parameters_1.txt

#GROUP name that should be used to change file group ownership (group of USER)

INST_GROUP=tomcat

#USER name that should be used to change file ownership (user running processes)

INST_USER=tomcat

#Specify the installation directory for OpenText Directory Services

INSTALL_DIR=/usr/local/OTDS

#Specify the directory, where (64-bit) Apache Tomcat 10 or higher is installed

TOMCAT_DIR=/app/tomcat

#This hostname is used by other instances to connect to the synchronization master host.

PRIMARY_FQDN=

#Is this server a supplementary instance to an existing environment?

ISREPLICA_TOPOLOGY=0

#Specify OpenDJ connection for import.

IMPORT_DATA=0

#Specify the data encryption key from an existing instance.

ENCRYPTION_KEY=

#OpenDJ LDAP URL (example: ldap://localhost:1389)

MIGRATION_OPENDJ_URL=

#Specify JDBC connection String (example: jdbc:postgresql://localhost:5432/postgres). NOTE: Enter these values carefully since they cannot be validated here. Refer to the OTDS installation and administration guide for JDBC URL samples for supported databases.

JDBC_CONNECTION_STRING=

#Specify Database User Name

JDBC_USERNAME=

[tomcat@otds-1 workspace_otds]$

Finally, the “otds.properties” file (normally generated during the installation) was also not present yet:

[tomcat@otds-1 workspace_otds]$ ls -l $APP_DATA/otds/config/otds.properties

ls: cannot access '/app/tomcat/app_data/otds/config/otds.properties': No such file or directory

[tomcat@otds-1 workspace_otds]$

I tried launching the installer multiple times, on that OTDS Replica, while making small changes to the silent properties file to see if something specific would cause it to fail. Starting by modifying the “JDBC_CONNECTION_STRING” parameter, since that is what the installer complained about, but without success. I then suspected the password parameter. Because passwords are masked in the logs (“*“), it is impossible to see whether the value is parsed correctly or not.

Therefore, I replaced the OTDS Admin password in the silent properties file with “dummyPassword“, and the installer suddenly proceeded further… I cancelled the installation because this was not the real password of the “otadmin” account on the Primary instance, but in this case the “JDBC_CONNECTION_STRING” parameter was no longer empty and the installer continued normally.

Note: the OTDS documentation specifies that passwords must contain at least eight characters, including one lowercase letter, one uppercase letter, one number, and one special character. However, it appears that this rule may not be strictly validated during Replica installations (and possibly not for the Primary either?).

At that point it became clear that the password itself was involved in the issue, somehow. Looking at the script “tools/setup.sh“, you can see that the installer extracts the value of “OTDS_PASS“, applies a function called “AsctoHex“, and then encrypts it. My original “otadmin” password was 16 characters long and satisfied all complexity requirements. However, I noticed that the password contained the string “HEX“. Since the installer converts the password to hexadecimal before encryption, I wondered whether the presence of the string “HEX” might interfere with this process. That would be quite unbelievable, right?

5. A problem with the password length or content?To test this idea, I removed the “E” in the middle, transforming “HEX” into “HX” and effectively reducing the password length by one character:

[tomcat@otds-1 workspace_otds]$ grep OTDS_PASS otds/silent.properties | awk -F= '{print $2}' | wc -c

17

[tomcat@otds-1 workspace_otds]$ # 17 means 16 char since wc -c count the new line in this command

[tomcat@otds-1 workspace_otds]$

[tomcat@otds-1 workspace_otds]$ sed -i 's,HEX,HX,' otds/silent.properties

[tomcat@otds-1 workspace_otds]$

[tomcat@otds-1 workspace_otds]$ grep OTDS_PASS otds/silent.properties | awk -F= '{print $2}' | wc -c

16

[tomcat@otds-1 workspace_otds]$ # 16 means 15 char now

To re-execute the installer after a failure, you must remove the content of the “/etc/opentext” directory (which kind of caches the content from the “silent.properties” file) and also delete the “otds.properties” file if it exists (not in my case):

[tomcat@otds-1 workspace_otds]$ rm -rf /etc/opentext/*

[tomcat@otds-1 workspace_otds]$

In addition to modifying the “silent.properties” file, I also changed the “otadmin” password through the OTDS otds-admin UI (see the OTDS Install & Admin Guide, section 7.2.5 “Resetting a user password”). Then I started a new Replica installation to see whether changing “HEX” to “HX” from the password would resolve the issue:

[tomcat@otds-1 workspace_otds]$ /app/scripts/workspace_otds/otds/setup -qbi -rf /app/scripts/workspace_otds/otds/silent.properties

OpenText Directory Services 24.4.0

------------------------------------------------------------------------------

OpenText Directory Services

------------------------------------------------------------------------------

Installing OpenText Directory Services Component

Please wait .

Installation of OpenText Directory Services Component OK

Installation completed. Results:

OpenText Directory Services Component OK

Installation finished.

[tomcat@otds-1 workspace_otds]$

… It worked …?

If the issue was really caused by the presence of “HEX” in the password, then replacing it with “HXE” should also work, right? Unfortunately, when I tried that, the issue came back… This indicates that the real problem is not the literal “HEX” string but maybe something related to password length, complexity, or how the installer processes and encrypts the password internally?

6. ConclusionIn the end, I reverted to the shorter 15-character password that worked and prepared all higher environments at this customer to use 15-character passwords. This approach worked without issue for five additional environments until, of course, it failed again, in Production…

Since it failed in another environment even with a 15-character password, the length alone does not seem to be the root cause. When reviewing previously installed environments across multiple customers, I found a few instances running with “otadmin” passwords of up to 19 characters long (about 111/120 bits of entropy according to a password manager like KeePass). This is significantly stronger than the 15-character password (96 bits) used in the Production environment where the issue occurred.

Therefore, since I couldn’t find any logical reasons why the issue happened on some environments but not with others, I opened a ticket with OpenText. I described everything and we went through several weeks of exchanges to try to find an explanation but without success. As of today, I still don’t know why ~10% of the OTDS Replicas that I installed faced an issue with the OT Admin password, but the fix, was simply to change the password in the UI and start the silent installation again. I don’t have an environment to test / debug that issue anymore, since it’s not easily reproducible. Guess I will need to wait for next time to get more debug logs from the OTDS installer (“-debug” option). In the meantime, I can only assume something is probably wrong in the way OTDS manages the password or its hash.

L’article OTDS – Installation of Replicas fail if the OT Admin password is too long? est apparu en premier sur dbi Blog.

Protected: Upgrade RHEL from 9.6 to 10.1 (when running PostgreSQL/Patroni)

This content is password-protected. To view it, please enter the password below.

Password:

L’article Protected: Upgrade RHEL from 9.6 to 10.1 (when running PostgreSQL/Patroni) est apparu en premier sur dbi Blog.

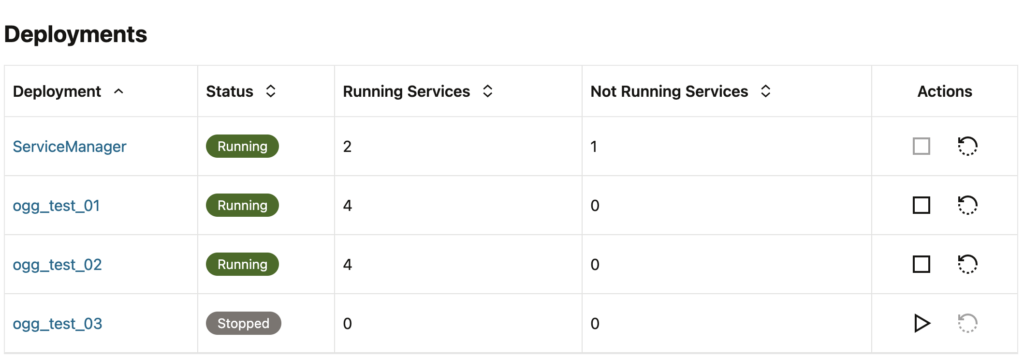

Deployment Creation INS-85037 Error With GoldenGate 26ai for DB2 z/OS

Among all the automation I was doing around a GoldenGate installation for DB2, I recently ended up with an INS-85037 error when running the configuration assistant oggca.sh. And because this error is quite common and has many different possible root causes, I wanted to write about it.

If you’re wondering how to set up GoldenGate 26ai for DB2 z/OS, it is very similar to what you would do with GoldenGate for Oracle. For more information on standard GoldenGate setups, you can read my blog posts about both 26ai and 23ai installations.

For the binary installation, the main difference is that INSTALL_OPTION should be set to DB2ZOS. A complete oggcore.rsp response file would look like this:

oracle.install.responseFileVersion=/oracle/install/rspfmt_ogginstall_response_schema_v23_1_0

INSTALL_OPTION=DB2ZOS

SOFTWARE_LOCATION=/u01/app/oracle/product/oggzos

INVENTORY_LOCATION=/u01/app/oraInventory

UNIX_GROUP_NAME=oinstallWhen running the configuration assistant, some options are not available, but the main difference is in the environment variables section of the response file. You should have an IBMCLIDRIVER variable set to your DB2 driver’s path.

ENV_LD_LIBRARY_PATH=${IBMCLIDRIVER}/1ib:${OGG_HOME}/1ib

IBMCLIDRIVER=/path/to/ibmclidriver

ENV_USER_VARS=That being said, here is the exact error I had when running the Configuration Assistant oggca.sh:

[FATAL] [INS-85037] Deployment creation failed.

ACTION: Check logs at /u01/app/oraInventory/logs/OGGCAConfigActions2026-03-22_15-19-15PM for more information.

*MORE DETAILS*

Return code 503 (Service Unavailable) does not match the expected code 201 (Created).

Verification failed for REST call to 'http://127.0.0.1:7810/services/v2/authorizations/security/ogguser'

Results for "Add a new deployment":

..."Verifying Service Manager deployment status.": SUCCEEDED

..."Adding 'zos_test' deployment.": SUCCEEDED

...."Configuring and starting the Administration Service.": SUCCEEDED

..."Verifying the initial Administration Service configuration.": SUCCEEDED

..."Adding user 'ogguser' to administer the deployment.": FAILED

Log of this session available at: /u01/app/oraInventory/logs/OGGCAConfigActions2026-03-22_15-19-15PM

The deployment creation failed and the associated files will be deleted from disk. Oracle recommends that if you want to keep the log files, you should move them to another location.

Log files will be copied to:

/u01/app/oraInventory/logs/OGGCAConfigActions2026-03-22_15-19-15PM/userdeploy_logs_2026-03-22_15-19-15PM

[WARNING] [INS-32090] Software installation was unsuccessful.

ACTION: Refer to the log files for details or contact Oracle Support Services.Unfortunately, the installation logs did not show anything other than the following:

SEVERE: Deployment creation job failed.

INFO: Service Manager deployment that was created as part of the process needs to be removed.

INFO: Running clean-up job for Service Manager.

SEVERE: Removing Service Manager deployment.The deployment and the service manager get deleted after the installation failure, but the logs are also copied to the oraInventory installation logs. Looking at the ServiceManager.log in the smdeploy folder, we don’t get much information.

ERROR| Configuration does not contain a 'config/network' specification. (ServiceManager.Topology)The same applies to the restapi.log, where the logs start after the initial deployment creation error. Unfortunately, none of this was really helpful in my case. After quite some digging, I found that the response file I was using when running oggca.sh had an error. In the custom section for environment variables, I had the following settings:

# SECTION G - ENVIRONMENT VARIABLES

ENY_LD_LIBRARY_PATH-S{IBMCLIDRIVER}/1ib:${OGG_HOME}/11b

IBMCLIDRIVER=/u01/app/ibm/db2_odbc_cli_11_5

ENV_USER_VARS=It looks like what I gave earlier, except that the path for the clidriver was incomplete.

oracle@vmogg:/home/oracle/ [ogg] ls -l /u01/app/ibm/db2_odbc_cli_11_5

drwxr-xr-x 3 oracle oinstall 23 Mar 22 2026 odbc_cli

oracle@vmogg:/home/oracle/ [ogg] ls -l /u01/app/ibm/db2_odbc_cli_11_5/odbc_cli/clidriver/

-r-xr-xr-x 1 oracle oinstall 4170 Mar 17 2021 Readme.txt

drwxr-xr-x 2 oracle oinstall 36 Mar 22 2026 adm

drwxr-xr-x 2 oracle oinstall 122 Mar 22 2026 bin

drwxr-xr-x 2 oracle oinstall 197 Mar 22 2026 bnd

drwxr-xr-x 2 oracle oinstall 157 Mar 22 09:16 cfg

drwxr-xr-x 2 oracle oinstall 24 Mar 22 2026 cfecache

drwxr-xr-x 4 oracle oinstall 27 Mar 22 2026 conv

drwxr-xr-x 3 oracle oinstall 49 Mar 22 09:26 db2dump

drwxr-xr-x 3 oracle oinstall 217 Mar 22 2026 lib

drwxr-xr-x 3 oracle oinstall 124 Mar 22 09:26 license

drwxr-xr-x 3 oracle oinstall 28 Mar 22 2026 msg

drwxr-xr-x 3 oracle oinstall 21 Mar 22 2026 properties

drwxr-xr-x 3 oracle oinstall 20 Mar 22 2026 security32

drwxr-xr-x 3 oracle oinstall 20 Mar 22 2026 security64After correcting the oggca.rsp response file with the correct path, the configuration assistant ran successfully.

oracle@vmogg:/u01/app/oracle/product/ogg26/bin [ogg] oggca.sh -silent -responseFile /home/oracle/oggca.rsp

Successfully Setup Software.Next time you encounter an error like this when setting up GoldenGate for DB2, make sure to check not only the variable value but also the actual content of the IBMCLIDRIVER directory !

NB: If you had this error for any other kind of setup, make sure to always check all the content of the response file you are using, as well as the prerequisites. (CLIDRIVER in this case, but it could be XAG, etc.)

L’article Deployment Creation INS-85037 Error With GoldenGate 26ai for DB2 z/OS est apparu en premier sur dbi Blog.

Discover refreshable clone PDB with Autoupgrade

AutoUpgrade with a refreshable clone is basically “zero‑panic upgrades with a live copy of your database.”.

What problem it solvesTraditionally you had to schedule a maintenance window, stop everything, take a backup, upgrade, and hope nothing went wrong.

With a refreshable clone PDB, AutoUpgrade builds and continuously syncs a copy of your database while production stays online. At cutover time, you just stop users, do a last refresh, convert/upgrade the clone, and switch them over. If something goes wrong, the original source is untouched and you can fall back quickly.

Core idea in simple termsThink of your non‑CDB or old‑version PDB as the “master” and the refreshable clone PDB as a “follow‑me” copy sitting in the target CDB.

AutoUpgrade:

- Creates a PDB in the target CDB via database link (initial clone of datafiles).

- Marks it as refreshable, so redo from the source is applied and it keeps rolling forward.

- Lets you test the clone (read‑only) while users are still working on the source.

- At a controlled start time, runs a last refresh, disconnects it from the source, converts it to a normal PDB, and upgrades it.

From your point of view: you prepare everything days in advance, and the real downtime shrinks to “final refresh + upgrade + app switch.”

High‑level lifecycleFor a non‑CDB to PDB migration or a PDB upgrade, the flow looks like this:

Preparation- You have a source: non‑CDB 12.2/19c or older PDB.

- You have a target: a higher‑version CDB (for example 23ai/26ai) with enough space and network.

- You configure AutoUpgrade with the source and target, plus the parameter telling it to use refreshable clone PDB.

- In deploy mode, AutoUpgrade creates the pluggable database in the target CDB via DB link, copies the datafiles, and defines it as refreshable.

- From now on, redo is shipped from source to target and applied, so the clone stays close to current.

- The source database stays fully online; business keeps running.

- The refreshable clone is read‑only, so you can query it, run app smoke tests, check performance characteristics, etc.

- AutoUpgrade keeps the job running in the background, doing periodic refreshes.

- When you reach the maintenance window, users leave the system and you quiesce activity on the source.

- AutoUpgrade performs a final refresh: last redo from source is applied on the clone so you don’t lose any committed data.

- The clone is then disconnected from the source, turned into a regular PDB, and AutoUpgrade moves into the upgrade and conversion steps (non‑CDB to PDB conversion if needed, then catalog/PSU/UTLRP, etc.).

- You point applications to the new PDB in the target CDB.

- The original source database still exists; if you hit a show‑stopper, you can redirect apps back to it and plan a new attempt.

In practice, the “scary” part is only the final refresh and the moment you switch your apps.

Why DBAs like this patternSome clear advantages:

- Minimal downtime: Most of the heavy lifting (copy + sync) happens while production is running; downtime is limited to final refresh and upgrade.

- Built‑in rollback: Because the source stays untouched, you always have a clean fallback without restore/recovery.

- Realistic testing: You test against a clone built from real production data that is almost up‑to‑date, not a weeks‑old backup.

- Automation: AutoUpgrade orchestrates the create‑clone, refresh, disconnect, convert, and upgrade steps; you mostly steer with parameters and commands instead of custom scripts.

Trade‑offs are mainly around resources: you need disk, CPU, and network to maintain the refreshable clone, and you have to ensure redo shipping is reliable (archivelog gaps or network glitches can break the refresh and need fixing).

Typical exampleImagine you need to move a 19c non‑CDB to a new 26ai CDB on a different host, with less than 30 minutes downtime:

- Monday: you configure AutoUpgrade with the refreshable clone option, start the job. The tool creates the PDB clone in the 26ai CDB and starts streaming redo. Users never notice.

- Next days: you let it refresh every few minutes, developers connect read‑only to the clone and test their application against 26ai. Everything looks good.

- Saturday night: you enter the maintenance window, let open transactions finish, stop app traffic, and tell AutoUpgrade to proceed to the final refresh. Once that’s done, it disconnects the clone, upgrades it, and runs post‑upgrade steps.

- After checks, you change the service names on the app side so they point to the new PDB. Your downtime is mostly spent waiting for the upgrade scripts, not copying terabytes of data.

L’article Discover refreshable clone PDB with Autoupgrade est apparu en premier sur dbi Blog.

How to Standardize SQL Server Disks on VMs using Ansible

Today, the benefits of automation no longer need much explanation: saving time, reducing human error, and ensuring every environment remains aligned with internal standards. What is less obvious, however, is how using an Ansible Playbook can provide advantages that more traditional scripting approaches — such as large PowerShell scripts — struggle to offer. That is exactly what I want to explore here.

When you complete an automated deployment of a SQL Server environment on Windows Server, there is a real sense of achievement. You have invested time and effort, and you expect that investment to pay off thanks to the reliability and repeatability of automation.

But everything changes when the next Windows Server upgrade or SQL Server version arrives… or when corporate standards evolve. Suddenly, you need to reopen a multi-thousand‑line PowerShell script and:

- Integrate the required changes while keeping execution stable,

- Avoid subtle but potentially critical regressions,

- Maintain clear and usable logging,

- Retest the entire automation workflow,

- Troubleshoot new issues introduced by the modifications.

This is precisely the type of situation where Ansible becomes a far better long‑term investment. Its architecture and philosophy offer several advantages:

- Native idempotence, ensuring the same result even after multiple runs,

- A declarative YAML approach, focusing on the desired end state rather than the execution steps,

- Windows Server and SQL Server modules, providing built‑in idempotence and saving significant time,

- Agentless connectivity, simplifying deployment on new machines,

- A modular structure (roles, modules, variables), making adaptation and reuse of your automation much easier.

In this article, I will give you a concrete overview by walking you through how to configure the disks required for SQL Server using Ansible.

1-Map iSCSI controllers to disk numbersWhen developing an Ansible Playbook, one fundamental principle is to design for idempotence from the very start—not just rely on idempotent modules.

On Windows, disk numbering is not guaranteed: it depends on several factors – how disks are detected at startup, the firmware, and so on.

As a result, disk numbers may change from one reboot to another.

To ensure consistent and reliable execution of your deployment, this behavior must be accounted for directly in the design of your Playbook.

Otherwise, it may introduce wrong behaviors, and lead to:

- formatting the wrong disk,

- mounting volumes on incorrect devices,

- completely breaking the SQL Server provisioning workflow.

In other words, idempotence is no longer guaranteed.

To ensure stable and predictable executions, you must determine dynamically the correct disk numbering at each execution.

You can use Get-Disk PowerShell command to achieve your goal, by searching iSCSI controller number and LUN position from Location property.

$adapter = {{ disk.adapter }}

$lun = {{ disk.lun}}

(Get-Disk | Where-Object {

$_.Location -match "Adapter $adapter\s+:.*\s+LUN $lun"

}).number

We have done our mapping between VM specifications and Windows disk numbers.

2-Loop SQL Server disksSince we often have several disks to configure — Data, Logs, TempDB — we need to perform the same actions repeatedly on each disk:

- dynamically determine the disk number,

- initialize it in GPT,

- create the partition and format the volume in NTFS with a 64 KB allocation unit size,

- assign an access path (drive letter or mountpoint),

- apply certain specific configuration settings, such as disabling indexing,

- verify the compliance of the disk configuration.

As these actions are identical for all disks, the best approach is to factorize the tasks.

The Ansible pattern, for such scenario, is to loop that call in a dedicated Task File.

---

- name: Manage all disk properties based on Location and Target numbers

ansible.builtin.include_tasks: disks_properties.yml

loop:

- name: data

location: "{{ disk_specs.data.location }}"

target: "{{ disk_specs.data.target }}"

label: "{{ disk_specs.data.label }}"

letter: "{{ disk_specs.data.letter }}"

- name: logs

location: "{{ disk_specs.logs.location }}"

target: "{{ disk_specs.logs.target }}"

label: "{{ disk_specs.logs.label }}"

letter: "{{ disk_specs.logs.letter }}"

- name: tempdb

location: "{{ disk_specs.tempdb.location }}"

target: "{{ disk_specs.tempdb.target }}"

label: "{{ disk_specs.tempdb.label }}"

letter: "{{ disk_specs.tempdb.letter }}"

loop_control:

loop_var: disk

...

Since we performed our loop in the previous section on the disks_properties.yml file, we can now implement the configuration actions inside this file.

First, we will retrieve the disk number and then begin configuring the disk according to best practices and our internal standards.

To guarantee idempotence, we will mark this step as not changed: this is only a Get action:

---

- name: Identify the {{ disk.name }} disk number

ansible.windows.win_shell: |

$adapter = {{ disk.target }}

$lun = {{ disk.location }}

(Get-Disk | Where-Object {

$_.Location -match "Adapter $adapter\s+:.*\s+LUN $lun"

}).number

register: disk_num

changed_when: false

Then, we will register the disk number as an Ansible Fact for all this task file execution call.

- name: Set fact for {{ disk.name }} disk number

ansible.builtin.set_fact:

"disk_number_{{ disk.name }}": "{{ disk_num.stdout | trim | int }}"

We can now initialize the disk using community.windows module. Of course, use Ansible module if possible.

The parameter disk_bps.partition_style is a variable of my Ansible Role, to guarantee GPT will be used.

- name: Initialize disks

community.windows.win_initialize_disk:

disk_number: "{{ lookup('vars', 'disk_number_' + disk.name) }}"

style: "{{ disk_bps.partition_style }}"

From there, we can create our partition:

- name: Create partition with letter {{ disk.letter }} for disk {{ disk.name }}

community.windows.win_partition:

drive_letter: "{{ disk.letter }}"

partition_size: "-1"

disk_number: "{{ lookup('vars', 'disk_number_' + disk.name) }}"

And now format our volume with allocation unit size 64KB:

- name: Create a partition letter {{ disk.letter }} on disk {{ disk.name }} with label {{ disk.label }}

community.windows.win_format:

drive_letter: "{{ disk.letter }}"

allocation_unit_size: "{{ disk_bps.allocation_unit_size_bytes }}"

new_label: "{{ disk.label }}"

...

As I mentioned earlier in previous section, we can also add tasks relative to some specific standards or a tasks to guarantee disk compliance.

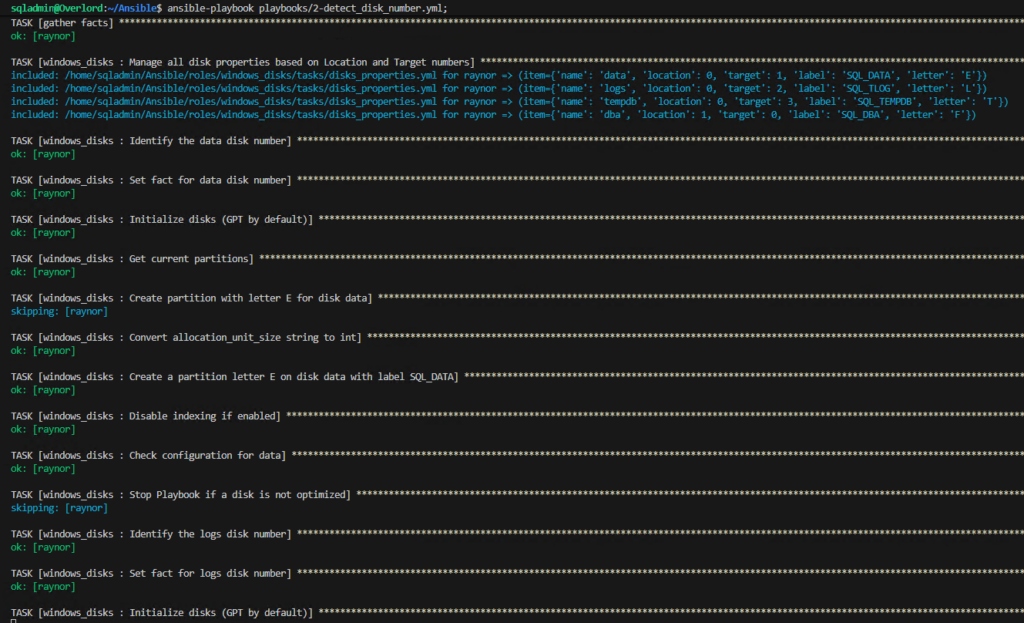

4- Execute the PlaybookNow that our Ansible Role windows_disks is ready, we can call it through a Playbook.

Of course, we must adjust the reality of the iSCSI configuration of the Virtual Machine.

---

- name: Configure Disks by detecting Disk Number

hosts: Raynor

gather_facts: false

vars:

disk_specs:

data:

location: 0

target: 1

label: SQL_DATA

letter: E

logs:

location: 0

target: 2

label: SQL_TLOG

letter: L

tempdb:

location: 0

target: 3

label: SQL_TEMPDB

letter: T

tasks:

- name: gather facts

ansible.builtin.setup:

changed_when: false

tags: [always]

- name: Configure Disks

ansible.builtin.import_role:

name: windows_disks

tags: windows_disks

...

CONCLUSION

CONCLUSION

We have had an overview of how Ansible makes automation easier to maintain and to evolve, by focusing on the logic of our deployment and not on the code to achieve it.

Now, updating your standards or upgrading versions will no longer require rewriting scripts, but mainly adapting variables.

However, it is important to be aware that idempotence must also be maintained through design.

L’article How to Standardize SQL Server Disks on VMs using Ansible est apparu en premier sur dbi Blog.

Creating Path Connections with GoldenGate REST API

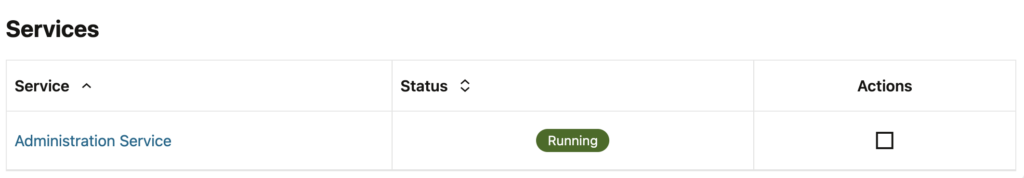

When automating your GoldenGate deployment management, you might want to create path connections with the GoldenGate REST API. This is an important aspect when connecting GoldenGate deployments with distribution paths. A first step towards this is to create a path connection on the same deployment as the distribution server where the distribution path will run.

In the GoldenGate web UI, you can easily create Path Connections. Just go to the Path Connections tab, add a path, and specify the following information:

- Credential Alias: Alias used to connect to the target deployment. It doesn’t have to match any name on the target deployment.

- User ID: Real username that must exist on the target deployment.

- Password: Password associated with the User ID given before.

restapi.log analysis

But what about the REST API ? When looking at the list of endpoints given by Oracle, no REST endpoint explicitly refers to path connections, so how to create path connections through the REST API ?

The key point to understand is that path connections are not independent GoldenGate objects. In fact, they exist as a subset of another object, which you should know by now : aliases. Aliases are created to store credentials and are organized in domains. The default domain is called OracleGoldenGate, and Oracle has a reserved name for a subtype of domains : Network.

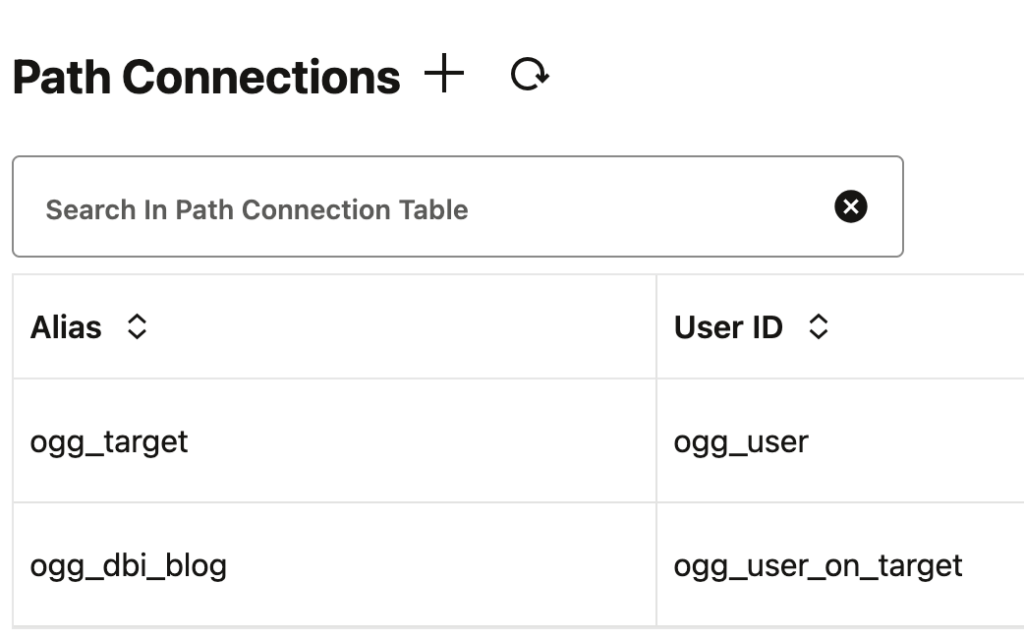

We can see this easily when creating a path connection through the web UI, and then looking at the restapi.log file. Open the log file located in the var/log folder of your deployment, or read the blog I wrote about restapi.log analysis. Using this method, we see the endpoint and the content of the API call. Here, for instance, I created a path connection from the web UI, to connect to ogg_user with the ogg_target alias.

oracle@vmogg: jq -c 'select (.request.context.verb == "POST" and .request.context.uriTemplate == "/services/{version}/credentials/{domain}/{alias}")' restapi.ndjson

{"request":{"context":{"verb":"POST","uri":"/services/v2/credentials/Network/ogg_target","uriTemplate":"/services/{version}/credentials/{domain}/{alias}"}},"content":{"userid":"ogg_user","password":"** Masked **"},...}To summarize, path connections are just aliases in the Network domain. This simplifies the creation of path connections. You just need to make a POST API call to the alias endpoint, specifying Network as the domain. The exact endpoint is then /services/{version}/credentials/Network/{alias}.

Quick example: using the GoldenGate Python client I presented in another blog, let’s create an alias in the Network domain :

>>> from oggrestapi import OGGRestAPI

>>> ogg_client = OGGRestAPI(

url="https://vmogg",

username="ogg",

password="ogg")

Connected to OGG REST API at https://vmogg

>>> ogg_client.create_alias(

alias='ogg_dbi_blog',

domain='Network',

data={

"userid": "ogg_user_on_target",

"password": "***"

}

)

{'$schema': 'api:standardResponse', 'links': [{'rel': 'canonical', 'href': 'https://vmogg/services/v2/credentials/Network/ogg_dbi_blog', 'mediaType': 'application/json'}, {'rel': 'self', 'href': 'https://vmogg/services/v2/credentials/Network/ogg_dbi_blog', 'mediaType': 'application/json'}], 'messages': [{'$schema': 'ogg:message', 'title': 'Credential store altered.', 'code': 'OGG-15114', 'severity': 'INFO', 'issued': '2026-03-22T10:14:01Z', 'type': 'https://docs.oracle.com/en/middleware/goldengate/core/23.26/error-messages/'}]}After refreshing the web UI, the newly created path connection is visible.

L’article Creating Path Connections with GoldenGate REST API est apparu en premier sur dbi Blog.

Dctm – Another DM_LICENSE_E_INVALID_LICENSE error but caused by JMS this time

At the end of last year, I published a first blog about a DM_LICENSE_E_INVALID_LICENSE error in D2 SSO login through OTDS. The root cause in that previous post was a duplicate user with one lowercase and one uppercase user_login_name. However, I did mention that there can be several reasons for that error. In this blog, I will describe another such case.

1. Symptoms in D2 logsThe generated D2 logs associated with this new issue are almost exactly the same. The only difference is that the Repository returns “null” as the userid (user_name). See the message “Authentication failed for user null with docbase REPO_NAME“. This wasn’t the case in the other blog post:

[tomcat@d2-0 logs]$ cat D2.log

...

2025-12-08 12:21:14,784 UTC [INFO ] (https-jsse-nio-8080-exec-47) - c.emc.x3.portal.server.X3HttpSessionListener : Created http session 8531D373A3EA12A398B158AF656E7D20

2025-12-08 12:21:14,784 UTC [DEBUG] (https-jsse-nio-8080-exec-47) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : OTDS : No user name on the Http session yet

2025-12-08 12:21:14,785 UTC [DEBUG] (https-jsse-nio-8080-exec-47) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : OTDS : No access_token found in Http request or Cookie Redirecting to OTDS Server

2025-12-08 12:21:14,786 UTC [DEBUG] (https-jsse-nio-8080-exec-47) - c.e.x.p.s.f.a.X3TrustHttpAuthenticationFilter : Identified scheme : https

2025-12-08 12:21:14,786 UTC [DEBUG] (https-jsse-nio-8080-exec-47) - c.e.x.p.s.f.a.X3TrustHttpAuthenticationFilter : Identified server name : d2.domain.com

2025-12-08 12:21:14,787 UTC [DEBUG] (https-jsse-nio-8080-exec-47) - c.e.x.p.s.f.a.X3TrustHttpAuthenticationFilter : Identified server port : 443

2025-12-08 12:21:14,787 UTC [DEBUG] (https-jsse-nio-8080-exec-47) - c.e.x.p.s.f.a.X3TrustHttpAuthenticationFilter : Built server host is : https://d2.domain.com:443

2025-12-08 12:21:14,788 UTC [DEBUG] (https-jsse-nio-8080-exec-47) - o.o.e.logging.slf4j.Slf4JLogLevelHandlers$4 : [EVENT SUCCESS -> /D2/HTTPUtilities] header name=Host, value=d2.domain.com

2025-12-08 12:21:14,789 UTC [DEBUG] (https-jsse-nio-8080-exec-47) - o.o.e.logging.slf4j.Slf4JLogLevelHandlers$4 : [EVENT SUCCESS -> /D2/HTTPUtilities] MaxHeaderValueSize: 8192

2025-12-08 12:21:14,792 UTC [DEBUG] (https-jsse-nio-8080-exec-47) - o.o.e.logging.slf4j.Slf4JLogLevelHandlers$4 : [EVENT SUCCESS -> /D2/HTTPUtilities] validating the input valued2.domain.com

2025-12-08 12:21:14,793 UTC [DEBUG] (https-jsse-nio-8080-exec-47) - c.e.x.p.s.f.a.X3TrustHttpAuthenticationFilter : Identified host : d2.domain.com

2025-12-08 12:21:14,794 UTC [DEBUG] (https-jsse-nio-8080-exec-47) - c.e.x.p.s.f.a.X3TrustHttpAuthenticationFilter : Overall base URL built : https://d2.domain.com/D2

2025-12-08 12:21:14,795 UTC [DEBUG] (https-jsse-nio-8080-exec-47) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : Redirection url post encoding - https%3A%2F%2Fd2.domain.com%2FD2%2Fd2_otds.html%3ForigUrl%3D%2FD2%2F

2025-12-08 12:21:14,797 UTC [DEBUG] (https-jsse-nio-8080-exec-47) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : OTDS : OAUTH final login sendRedirect URL : https://otds-mfa.domain.com/otdsws/oauth2/auth?response_type=token&client_id=dctm-ns-d2&redirect_uri=https%3A%2F%2Fd2.domain.com%2FD2%2Fd2_otds.html%3ForigUrl%3D%2FD2%2F&logon_appname=Documentum+Client+CE+23.4

2025-12-08 12:21:14,798 UTC [DEBUG] (https-jsse-nio-8080-exec-47) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : OTDS : Sending redirection as it's not a rpc call : https://otds-mfa.domain.com/otdsws/oauth2/auth?response_type=token&client_id=dctm-ns-d2&redirect_uri=https%3A%2F%2Fd2.domain.com%2FD2%2Fd2_otds.html%3ForigUrl%3D%2FD2%2F&logon_appname=Documentum+Client+CE+23.4

2025-12-08 12:21:15,018 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - o.o.e.logging.slf4j.Slf4JLogLevelHandlers$4 : [EVENT SUCCESS -> /D2/HTTPUtilities] MaxHeaderKeySize: 256

2025-12-08 12:21:15,018 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - o.o.e.logging.slf4j.Slf4JLogLevelHandlers$4 : [EVENT SUCCESS -> /D2/HTTPUtilities] MaxHeaderValueSize: 8192

2025-12-08 12:21:15,020 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : OTDS : No user name on the Http session yet

2025-12-08 12:21:15,021 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : OTDS : Found access_token on Http Cookie, invalidating the cookie by setting maxAge 0

2025-12-08 12:21:15,022 UTC [INFO ] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : OTDS : setting the cookie as secure as its a https request

2025-12-08 12:21:15,024 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : OTDS : OTDS responded with a oauth token

2025-12-08 12:21:15,025 UTC [INFO ] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : ------ Begin getUntrustedJwtHeader : eyJraWQiOiI1YjM4...oSD8Xh3vVmkekcA

2025-12-08 12:21:15,026 UTC [INFO ] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : getUntrustedJwtHeader oauthTokenWithoutSignature : eyJraWQiOiI1YjM4...i1xYWN0LWQyIn0.

2025-12-08 12:21:15,614 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : ------ Begin validateOTDSTokenClaims : MYUSERID

2025-12-08 12:21:15,615 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : validateOTDSTokenClaims for user : MYUSERID , OTDS : currenttime: 1765196475615 expirationtime: 1765200074000

2025-12-08 12:21:15,615 UTC [INFO ] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : ------ End validateOTDSTokenClaims : MYUSERID

2025-12-08 12:21:15,615 UTC [INFO ] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : PublicKey for Key id : 5b38b...bf487 exists

2025-12-08 12:21:15,617 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : OTDS Deafault Repository from shiro configured : REPO_NAME

2025-12-08 12:21:15,617 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : OTDS : generating DM_Ticket for user : MYUSERID in Repository : REPO_NAME

2025-12-08 12:21:16,522 UTC [ERROR] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : OTDS : OAuth Token Error occurred while generating a DCTM MultiUse Ticket for user : MYUSERID

2025-12-08 12:21:16,522 UTC [ERROR] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : OTDS : OAuth Token Error please validate the OTDS Config of user exists in Repository

com.documentum.fc.client.DfAuthenticationException: [DM_SESSION_E_AUTH_FAIL]error: "Authentication failed for user null with docbase REPO_NAME."

at com.documentum.fc.client.impl.docbase.DocbaseExceptionMapper.newException(DocbaseExceptionMapper.java:52)

at com.documentum.fc.client.impl.connection.docbase.MessageEntry.getException(MessageEntry.java:39)

at com.documentum.fc.client.impl.connection.docbase.DocbaseMessageManager.getException(DocbaseMessageManager.java:137)

at com.documentum.fc.client.impl.connection.docbase.netwise.NetwiseDocbaseRpcClient.checkForMessages(NetwiseDocbaseRpcClient.java:332)

at com.documentum.fc.client.impl.connection.docbase.netwise.NetwiseDocbaseRpcClient.applyForObject(NetwiseDocbaseRpcClient.java:680)

at com.documentum.fc.client.impl.connection.docbase.DocbaseConnection$8.evaluate(DocbaseConnection.java:1572)

at com.documentum.fc.client.impl.connection.docbase.DocbaseConnection.evaluateRpc(DocbaseConnection.java:1272)

at com.documentum.fc.client.impl.connection.docbase.DocbaseConnection.applyForObject(DocbaseConnection.java:1564)

at com.documentum.fc.client.impl.docbase.DocbaseApi.authenticateUser(DocbaseApi.java:1894)

at com.documentum.fc.client.impl.connection.docbase.DocbaseConnection.authenticate(DocbaseConnection.java:460)

at com.documentum.fc.client.impl.connection.docbase.DocbaseConnection.open(DocbaseConnection.java:140)

at com.documentum.fc.client.impl.connection.docbase.DocbaseConnection.<init>(DocbaseConnection.java:109)

at com.documentum.fc.client.impl.connection.docbase.DocbaseConnection.<init>(DocbaseConnection.java:69)

at com.documentum.fc.client.impl.connection.docbase.DocbaseConnectionFactory.newDocbaseConnection(DocbaseConnectionFactory.java:32)

at com.documentum.fc.client.impl.connection.docbase.DocbaseConnectionManager.createNewConnection(DocbaseConnectionManager.java:202)

at com.documentum.fc.client.impl.connection.docbase.DocbaseConnectionManager.getDocbaseConnection(DocbaseConnectionManager.java:132)

at com.documentum.fc.client.impl.session.SessionFactory.newSession(SessionFactory.java:24)

...

at org.apache.tomcat.util.net.SocketProcessorBase.run(SocketProcessorBase.java:52)

at org.apache.tomcat.util.threads.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1190)

at org.apache.tomcat.util.threads.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:659)

at org.apache.tomcat.util.threads.TaskThread$WrappingRunnable.run(TaskThread.java:63)

at java.base/java.lang.Thread.run(Thread.java:840)

2025-12-08 12:21:16,524 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : redirectToErrorPage : Redirecting to Error Page as Login failed for user : null and exception : {}

com.emc.x3.portal.server.filters.authc.X3OTDSAuthenticationFilter$1: Authentication failed for user null with repository REPO_NAME.

at com.emc.x3.portal.server.filters.authc.X3OTDSAuthenticationFilter.validateTokenAndGetUserId(X3OTDSAuthenticationFilter.java:1167)

at com.emc.x3.portal.server.filters.authc.X3OTDSAuthenticationFilter.onAccessDenied(X3OTDSAuthenticationFilter.java:293)

at org.apache.shiro.web.filter.AccessControlFilter.onAccessDenied(AccessControlFilter.java:133)

at org.apache.shiro.web.filter.AccessControlFilter.onPreHandle(AccessControlFilter.java:162)

at org.apache.shiro.web.filter.PathMatchingFilter.isFilterChainContinued(PathMatchingFilter.java:223)

at org.apache.shiro.web.filter.PathMatchingFilter.preHandle(PathMatchingFilter.java:198)

...

at org.apache.tomcat.util.net.SocketProcessorBase.run(SocketProcessorBase.java:52)

at org.apache.tomcat.util.threads.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1190)

at org.apache.tomcat.util.threads.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:659)

at org.apache.tomcat.util.threads.TaskThread$WrappingRunnable.run(TaskThread.java:63)

at java.base/java.lang.Thread.run(Thread.java:840)

2025-12-08 12:21:16,524 UTC [INFO ] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : Adding the LicenseException to the Session : DM_SESSION_E_AUTH_FAIL

2025-12-08 12:21:16,526 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.a.X3TrustHttpAuthenticationFilter : Identified scheme : https

2025-12-08 12:21:16,526 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.a.X3TrustHttpAuthenticationFilter : Identified server name : d2.domain.com

2025-12-08 12:21:16,526 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.a.X3TrustHttpAuthenticationFilter : Identified server port : 443

2025-12-08 12:21:16,528 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.a.X3TrustHttpAuthenticationFilter : Built server host is : https://d2.domain.com:443

2025-12-08 12:21:16,529 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - o.o.e.logging.slf4j.Slf4JLogLevelHandlers$4 : [EVENT SUCCESS -> /D2/HTTPUtilities] header name=Host, value=d2.domain.com

2025-12-08 12:21:16,530 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - o.o.e.logging.slf4j.Slf4JLogLevelHandlers$4 : [EVENT SUCCESS -> /D2/HTTPUtilities] MaxHeaderValueSize: 8192

2025-12-08 12:21:16,531 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - o.o.e.logging.slf4j.Slf4JLogLevelHandlers$4 : [EVENT SUCCESS -> /D2/HTTPUtilities] validating the input valued2.domain.com

2025-12-08 12:21:16,532 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.a.X3TrustHttpAuthenticationFilter : Identified host : d2.domain.com

2025-12-08 12:21:16,533 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.a.X3TrustHttpAuthenticationFilter : Overall base URL built : https://d2.domain.com/D2

2025-12-08 12:21:16,534 UTC [DEBUG] (https-jsse-nio-8080-exec-5) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : D2 redirecting to errorPage JSP : https://d2.domain.com/D2/errors/authenticationError.jsp

2025-12-08 12:21:16,567 UTC [DEBUG] (https-jsse-nio-8080-exec-26) - o.o.e.logging.slf4j.Slf4JLogLevelHandlers$4 : [EVENT SUCCESS -> /D2/HTTPUtilities] MaxHeaderKeySize: 256

2025-12-08 12:21:16,567 UTC [DEBUG] (https-jsse-nio-8080-exec-26) - o.o.e.logging.slf4j.Slf4JLogLevelHandlers$4 : [EVENT SUCCESS -> /D2/HTTPUtilities] MaxHeaderValueSize: 8192

2025-12-08 12:21:16,568 UTC [DEBUG] (https-jsse-nio-8080-exec-26) - c.e.x.p.s.f.authc.X3OTDSAuthenticationFilter : No LicenseExcepton found on HttpSession hence not Redirectling to License ErrorPage

2025-12-08 12:21:16,571 UTC [DEBUG] (https-jsse-nio-8080-exec-26) - c.e.x.p.s.f.a.X3TrustHttpAuthenticationFilter : Selected Repository : REPO_NAME

2025-12-08 12:21:16,573 UTC [DEBUG] (https-jsse-nio-8080-exec-26) - o.o.e.logging.slf4j.Slf4JLogLevelHandlers$4 : [EVENT SUCCESS -> /D2/HTTPUtilities] MaxHeaderKeySize: 256

2025-12-08 12:21:16,574 UTC [DEBUG] (https-jsse-nio-8080-exec-26) - o.o.e.logging.slf4j.Slf4JLogLevelHandlers$4 : [EVENT SUCCESS -> /D2/HTTPUtilities] MaxHeaderValueSize: 8192

2025-12-08 12:21:16,578 UTC [INFO ] (https-jsse-nio-8080-exec-26) - c.emc.x3.portal.server.X3HttpSessionListener : Expired Http session id : 8531D373A3EA12A398B158AF656E7D20

2025-12-08 12:21:16,578 UTC [DEBUG] (https-jsse-nio-8080-exec-26) - com.emc.x3.server.context.ContextManager : Create a new context manager

...

[tomcat@d2-0 logs]$

As usual, the next step is to check the Repository logs with the authentication trace enabled:

[dmadmin@cs-0 ~]$ cat $DOCUMENTUM/dba/log/$DOCBASE_NAME.log

...

2025-12-08T12:21:16.235912 3567122[3567122] 0101234580c77e96 [AUTH] Entering RPC AUTHENTICATE_USER

2025-12-08T12:21:16.236052 3567122[3567122] 0101234580c77e96 [AUTH] Start Authentication : LOGON_NAME=MYUSERID, DOMAIN_NAME=, OS_LOGON_NAME=tomcat, OS_LOGON_DOMAIN=, ASSUME_USER=0, TRUSTED_LOGIN_ALLOWED=1, PRINCIPAL_AUTH=0, DO_SET_LOCALE=0, RECONNECT=0, CLIENT_TOKEN=[-36, 8, 66, 12, 89, 102, -85, -11, 6, -115, -34, -68, -123, 11, 100]

2025-12-08T12:21:16.236115 3567122[3567122] 0101234580c77e96 [AUTH] Start Authenticate Client Instance

2025-12-08T12:21:16.236215 3567122[3567122] 0101234580c77e96 [AUTH] Start Verify Signature, Client : dfc_327WHMY40Mglbp4taDgajZEM39Lc , Host : d2-0.d2.dctm-ns.svc.cluster.local

2025-12-08T12:21:16.244603 3567122[3567122] 0101234580c77e96 [AUTH] End Verify Signature, Client : dfc_327WHMY40Mglbp4taDgajZEM39Lc , Host : d2-0.d2.dctm-ns.svc.cluster.local

2025-12-08T12:21:16.244657 3567122[3567122] 0101234580c77e96 [AUTH] End Authenticate Client Instance

2025-12-08T12:21:16.303325 3567122[3567122] 0101234580c77e96 [AUTH] Start-AuthenticateUser: ClientHost(d2-0.d2.dctm-ns.svc.cluster.local), LogonName(null), LogonOSName(tomcat), LogonOSDomain(), UserExtraDomain(), ServerDomain()

2025-12-08T12:21:16.303410 3567122[3567122] 0101234580c77e96 [AUTH] Start-AuthenticateUserName:

2025-12-08T12:21:16.303442 3567122[3567122] 0101234580c77e96 [AUTH] dmResolveNamesForCredentials: auth_protocol()

2025-12-08T12:21:16.305698 3567122[3567122] 0101234580c77e96 [AUTH] [DM_USER_E_NOT_DOCUMENTUM_USER]error: "User null does not exist in the docbase"

2025-12-08T12:21:16.305720 3567122[3567122] 0101234580c77e96 [AUTH] End-AuthenticateUserName: dm_user.user_login_domain(), Result: 0

2025-12-08T12:21:16.305730 3567122[3567122] 0101234580c77e96 [AUTH] Not Found dm_user.user_login_name(null), dm_user.user_login_domain()

2025-12-08T12:21:16.519331 3567122[3567122] 0101234580c77e96 [AUTH] Final Auth Result=F, LOGON_NAME=null, AUTHENTICATION_LEVEL=1, OS_LOGON_NAME=tomcat, OS_LOGON_DOMAIN=, CLIENT_HOST_NAME=d2-0.d2.dctm-ns.svc.cluster.local, CLIENT_HOST_ADDR=172.1.1.1, USER_LOGON_NAME_RESOLVED=1, AUTHENTICATION_ONLY=0, USER_NAME=, USER_OS_NAME=null, USER_LOGIN_NAME=null, USER_LOGIN_DOMAIN=, USER_EXTRA_CREDENTIAL[0]=, USER_EXTRA_CREDENTIAL[1]=, USER_EXTRA_CREDENTIAL[2]=e2, USER_EXTRA_CREDENTIAL[3]=, USER_EXTRA_CREDENTIAL[4]=, USER_EXTRA_CREDENTIAL[5]=, SERVER_SESSION_ID=0101234580c77e96, AUTH_BEGIN_TIME=Mon Dec 8 12:21:16 2025, AUTH_END_TIME=Mon Dec 8 12:21:16 2025, Total elapsed time=0 seconds

2025-12-08T12:21:16.519359 3567122[3567122] 0101234580c77e96 [AUTH] Exiting RPC AUTHENTICATE_USER

...

[dmadmin@cs-0 ~]$

There is one thing that is quite strange in these logs. If you look at the beginning, it traces the authentication for “MYUSERID“. But then, in the middle of the process, that user_name becomes “null“. I do not recall seeing that behavior before, so I started investigating what might have caused it.

The account “MYUSERID” existed in the Repository. This issue occurred on the same application as in the previous blog post, but this time in the TEST/QA environment (instead of DEV). The same OTDS and users were present, so my account was definitely there (without duplicates in TEST/QA).

3. Investigating OTDS authentication logsSince the dm_user object had a “user_source” of OTDS, I then checked the OTDS Authentication log file from the JMS. For this Documentum 23.4 version, the log file was “$JMS_HOME/logs/otdsauth.log“. Starting from version 25.4, this log file is located inside “$DOCUMENTUM/dba/log” instead:

[dmadmin@cs-0 ~]$ cat $JMS_HOME/logs/otdsauth.log

...

2025-12-08 11:49:46,106 UTC ERROR [] (https-jsse-nio-9082-exec-36) Thread[https-jsse-nio-9082-exec-36,5,main] java.io.IOException: Unable to tunnel through proxy. Proxy returns "HTTP/1.1 502 Bad Gateway"

at java.base/sun.net.www.protocol.http.HttpURLConnection.doTunneling0(HttpURLConnection.java:2311)

at java.base/sun.net.www.protocol.http.HttpURLConnection.doTunneling(HttpURLConnection.java:2181)

at java.base/sun.net.www.protocol.https.AbstractDelegateHttpsURLConnection.connect(AbstractDelegateHttpsURLConnection.java:185)

at java.base/sun.net.www.protocol.http.HttpURLConnection.getOutputStream0(HttpURLConnection.java:1465)

at java.base/sun.net.www.protocol.http.HttpURLConnection.getOutputStream(HttpURLConnection.java:1436)

at java.base/sun.net.www.protocol.https.HttpsURLConnectionImpl.getOutputStream(HttpsURLConnectionImpl.java:220)

at com.documentum.cs.otds.OTDSAuthenticationServlet.validatePassword(OTDSAuthenticationServlet.java:275)

at com.documentum.cs.otds.OTDSAuthenticationServlet.doPost(OTDSAuthenticationServlet.java:175)

at jakarta.servlet.http.HttpServlet.service(HttpServlet.java:590)

...

at org.apache.tomcat.util.net.NioEndpoint$SocketProcessor.doRun(NioEndpoint.java:1740)

at org.apache.tomcat.util.net.SocketProcessorBase.run(SocketProcessorBase.java:52)

at org.apache.tomcat.util.threads.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1191)

at org.apache.tomcat.util.threads.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:659)

at org.apache.tomcat.util.threads.TaskThread$WrappingRunnable.run(TaskThread.java:61)

at java.base/java.lang.Thread.run(Thread.java:840)

2025-12-08 12:21:16,302 UTC ERROR [] (https-jsse-nio-9082-exec-50) Exception while fetching certificates from jwks url

[dmadmin@cs-0 ~]$

The first error message (11:49) occurred about 30 minutes before the authentication attempt. On the other hand, the last line (12:21) is directly linked to the problem according to its timestamp. This indicates that the Documentum Server was trying to fetch the JWKS certificate. This happens when the OTDS Authentication Servlet is configured with the “auto_cert_refresh=true” parameter (see the “otdsauth.properties” file).

This forces the Documentum Server to contact the OTDS Server in order to retrieve the correct or current SSL certificate to use. However, that request failed. Even though it is not explicitly written, it is easy to deduce that the first error, related to a proxy communication issue, is the root cause.

4. Checking newly added proxy and correcting itAs far as I knew, there should not have been any proxy configured on Documentum, since all components are internal to the customer and located within the same network. However, when checking the startup logs of the JMS, I noticed that a new proxy configuration had recently been added when the Tomcat process restarted less than two hours earlier:

[dmadmin@cs-0 ~]$ grep proxy $JMS_HOME/logs/catalina.out

...

2025-12-08 10:54:56,385 UTC INFO [main] org.apache.catalina.startup.VersionLoggerListener.log Command line argument: -Dhttp.proxyHost=proxy.domain.com

2025-12-08 10:54:56,385 UTC INFO [main] org.apache.catalina.startup.VersionLoggerListener.log Command line argument: -Dhttp.proxyPort=2010

2025-12-08 10:54:56,385 UTC INFO [main] org.apache.catalina.startup.VersionLoggerListener.log Command line argument: -Dhttps.proxyHost=proxy.domain.com

2025-12-08 10:54:56,385 UTC INFO [main] org.apache.catalina.startup.VersionLoggerListener.log Command line argument: -Dhttps.proxyPort=2011

...

[dmadmin@cs-0 ~]$

After checking with the relevant teams, it turned out that this issue was not really related to Documentum itself. Someone had simply restarted the JMS after adding proxy settings as new JVM parameters while testing an external service that required internet access. Yes, directly in TEST/QA without validating in DEV first – it happens apparently.

However, since no exceptions were configured through the no_proxy setting (“-Dhttp.nonProxyHosts” JVM parameter), it meant that 100% of the requests initiated by the JVM were forwarded to the proxy. That proxy had no knowledge of the OTDS server (which is expected), so the communication simply failed.

After correcting the proxy configuration (either by removing it or by adding all internal domains to the no_proxy setting), the JVM was able to communicate with OTDS again. As a consequence, the D2 SSO started working successfully and the environment was back “online” for all testers. These two blog posts clearly demonstrate that just because D2 displays an error, it doesn’t mean that the real root cause is obvious. Careful investigation and analysis of the log files is always essential.

L’article Dctm – Another DM_LICENSE_E_INVALID_LICENSE error but caused by JMS this time est apparu en premier sur dbi Blog.

Credential Errors (OGG-15409) with GoldenGate Migration Utility

The GoldenGate migration utility provided by Oracle allows you to quickly upgrade your classic architecture into GoldenGate 26ai with Microservices Architecture. But even after some updates, it still has a few bugs, as I explained in a previous blog post.

One of them can lead to an OGG-15409 error during the migration. This error will not appear when running the migration tool in dryrun mode. You might then be faced with this issue only when doing the real migration. Here is the exact error:

ERROR: Unable to patch EXTRACT EXT, response is HTTP Status-Code 400: Bad Request..

[ERROR] OGG-15409 - Alias 'ggadmin_alias' not found in credential store domain 'OracleGoldenGate'.

Extract EXT Process Definitions patched.The first step is to understand what is causing the issue. For this, you need to understand how the GoldenGate migration utility works.

When migrating extracts (or replicats), GoldenGate will make API calls to the new Microservices Architecture administration service to register the extract (or replicat). Once created, it will alter it with a PATCH request to update the credentials used.

We can see it in the restapi.log:

{"context":{"verb":"PATCH","uri":"/services/v2/extract/EXT",...},"content":{"credentials":{"alias":"ggadmin_alias","domain":"OracleGoldenGate"}},...}Unfortunately, once the migration is done, you cannot re-run the migration. You will need to fix this manually.

But since this is the only post-migration task made on extracts and replicats, it is rather easy to do. You can just create the aliases first, and call the REST API to alter all extracts and replicats. In Python, using the client I presented in a previous blog post, it would look like the following. First, create the client connection.

from oggrestapi import OGGRestAPI

ogg_client = OGGRestAPI(url='https://vmogg:7810', username='ogg', password='***')Then, check the content of the extract (or replicat) using the retrieve_extract (or retrieve_replicat) method. For the moment, we don’t see any credentials key.

# This retrieves all the configuration of an extract, except for the configuration file

>>> {k:v for k,v in ogg_client.retrieve_extract('EXT').items() if k != 'config'}

{'$schema': 'ogg:extract', 'targets': [{'name': 'aa', 'path': 'source', 'sizeMB': 500, ...}], 'description': 'dbi blog migration', 'source': 'tranlogs', 'type': 'Integrated'}Then, create the alias(es) with the create_alias method.

ogg_client.create_alias(

alias='ggadmin_alias',

domain='OracleGoldenGate',

data={

"userid":"ggadmin@vmora:1521/DB",

"password": "***"

}

)And finally, alter the extracts with the update_extract method.

ogg_client.update_extract(

extract='EXT',

data={

"alias": "ggadmin_alias",

"domain": "OracleGoldenGate"

}

)If you had the issue with a replicat, the syntax is exactly the same, with the update_replicat method.

ogg_client.update_replicat(

replicat='REP',

data={

"alias": "ggadmin_alias",

"domain": "OracleGoldenGate"

}

)You can check that the credentials are there by reusing the retrieve_extract (or retrieve_replicat) method. This time, we see the credentials key !

>>> {k:v for k,v in ogg_client.retrieve_extract('EXT').items() if k != 'config'}

{'$schema': 'ogg:extract', 'credentials': {'alias': 'ggadmin_alias', 'domain': 'OracleGoldenGate'}, 'targets': [{'name': 'aa', 'path': 'source', 'sizeMB': 500, ...}], 'description': 'dbi blog migration', 'source': 'tranlogs', 'type': 'Integrated', ...}For some reason, the credentials of the source setup will not always be migrated. If you don’t have too many aliases, I would suggest creating the aliases in the target environment. This way, you know they are working even before attempting the migration. This should definitely be part of your new deployment tests.

L’article Credential Errors (OGG-15409) with GoldenGate Migration Utility est apparu en premier sur dbi Blog.

Remove grant to public in Oracle databases

The Center for Internet Security publishes the “CIS Oracle database 19c Benchmark” with recommendations to enhance the security of Oracle databases.

One type of recommendations is to remove grant execute to public (chapter 5.1.1.1-5.1.1.7 Public Privileges). There is a list of powerful SYS packages. And for security reasons, only users that really need this functionality should have access to it. But per default, it is granted to public and all users can use it.

In theory, to fix that is easy, e.g.

REVOKE EXECUTE ON DBMS_LDAP FROM PUBLIC;But is that really a good idea?

Who is using an object from another schema?If the object is used in a program unit, a named PL/SQL block (package, function, procedure, trigger), you can see the dependency in the view dba_dependencies.

select distinct owner from dba_dependencies

where referenced_name='DBMS_LDAP' and owner<>'SYS'

order by 2,1;

And for these objects, the users already have a direct grant for it. So, remove of the public grant does not affect these user-objects.

But wait! Rarely used, but there are named blocks with invokers right’s (create procedure procname AUTHID CURRENT_USER is…) . See How Roles Work in PL/SQL Blocks

select owner, object_name from dba_procedures where authid='CURRENT_USER';

In this case the user can also access objects used in program units he has granted via a role. You have to check which users have access to these program units. These users are potentially affected by the change!

For objects used outside of above program units: If a user has a direct grant, or an indirect grant via a role to the object, removing the grant to public does not affect the work of this user with these objects.

So, what about the other users without direct/indirect grants to the object (except “public”)? How can we see if above mentioned objects are used (e.g. from external code in a Perl script or an application server connecting to the database)?

To see the usage of an object, we can use unified auditing and create an audit policy for the object.

create audit policy CIS_CHECK_USAGE

actions

execute on sys.dbms_ldap

when 'SYS_CONTEXT(''USERENV'', ''CURRENT_USER'') != ''SYS''' EVALUATE PER STATEMENT;

audit policy CIS_CHECK_USAGE;

alter audit policy cis_check_usage add actions EXECUTE on SYS.DBMS_LOB;

alter audit policy cis_check_usage add actions ...

Hint: Unified auditing can also be used if the Oracle binary is not relinked for unified audit (the relink only deactivates traditional auditing, unified auditing is always active)

To automate above steps, you can do it dynamically with the Perl script below (run it with $ORACLE_HOME/perl/bin/perl, so the required Oracle modules are present):

use DBI;

my $dbh = DBI->connect('dbi:Oracle:', '', '',{ PrintError => 1, ora_session_mode=>2 });

my @pdblist;

my $sth=$dbh->prepare(q{select PDB_NAME from cdb_pdbs where pdb_name<>'PDB$SEED' union select 'CDB$ROOT' from dual});

$sth->execute();

while (my @row = $sth->fetchrow_array) {

push(@pdblist, $row[0]);

}

foreach my $pdb (@pdblist){

# switch PDB

print "PDB=$pdb\n";

$dbh->do("alter session set container=$pdb");

# create cis_check_usage

print q{ create audit policy cis_check_usage actions all on sys.AUD$ when 'SYS_CONTEXT(''USERENV'', ''CURRENT_USER'') != ''SYS''' EVALUATE PER STATEMENT}."\n";

$dbh->do(q{ create audit policy cis_check_usage actions all on sys.AUD$ when 'SYS_CONTEXT(''USERENV'', ''CURRENT_USER'') != ''SYS''' EVALUATE PER STATEMENT});

$dbh->do(q{ audit policy cis_check_usage});

# add execute to public

my $sql=q{

SELECT PRIVILEGE||' on '||owner||'.'||table_name FROM DBA_TAB_PRIVS WHERE GRANTEE='PUBLIC' AND PRIVILEGE='EXECUTE' AND TABLE_NAME IN (

'DBMS_LDAP','UTL_INADDR','UTL_TCP','UTL_MAIL','UTL_SMTP','UTL_DBWS','UTL_ORAMTS','UTL_HTTP','HTTPURITYPE',

'DBMS_ADVISOR','DBMS_LOB','UTL_FILE',

'DBMS_CRYPTO','DBMS_OBFUSCATION_TOOLKIT', 'DBMS_RANDOM',

'DBMS_JAVA','DBMS_JAVA_TEST',

'DBMS_SCHEDULER','DBMS_JOB',

'DBMS_SQL', 'DBMS_XMLGEN', 'DBMS_XMLQUERY','DBMS_XMLSTORE','DBMS_XMLSAVE','DBMS_AW','OWA_UTIL','DBMS_REDACT',

'DBMS_CREDENTIAL'

)};

$sth=$dbh->prepare("$sql");

$sth->execute();

while (my @result = $sth->fetchrow_array) {

print "alter audit policy cis_check_usage add actions $result[0]\n";

$dbh->do("alter audit policy cis_check_usage add actions $result[0]");

}

}

After some days/weeks, you can evaluate the usage of dbms_ldap or other objects audited by the cis_check_usage policy

select dbusername, current_user, object_schema||'.'||object_name as object,

sql_text, system_privilege_used,

system_privilege, unified_audit_policies, con_id , event_timestamp

from cdb_unified_audit_trail

where unified_audit_policies like '%CIS_CHECK_USAGE%';

With this query, we see the usage of the objects we audited with the CIS_CHECK_USAGE policy. If there are no rows, check if you really enabled the policy (select * from audit_unified_enabled_policies where policy_name='CIS_CHECK_USAGE';)

With the next query, we exclude the objects per user that can be accessed by a direct grant or a grant via a role, so, a revoke from public will not affect this user.

select distinct current_user, action_name, object_schema, object_name, con_id

from cdb_unified_audit_trail a

where unified_audit_policies like '%CIS_CHECK_USAGE%'

and current_user not in (

select grantee from cdb_tab_privs -- direct grant

where owner=a.object_schema and table_name=a.object_name and con_id=a.con_id

union all

select r.grantee from cdb_role_privs r, cdb_tab_privs t -- grant via role

where r.granted_role=t.grantee and r.con_id=t.con_id

and r.grantee=a.current_user and t.owner=a.object_schema

and t.table_name=a.object_name and r.con_id=a.con_id

);

And what is left, needs attention.

Sometimes the objects are used by a background process, e.g. if you see the object_name DBMS_SQL, but in sql_text it is not used, then the user probably does not need it. But if it is present in sql_text, then the user definitely needs a grant. I recommend to grant the object via a role, so it behaves as before, the user can use it directly, but not in procedures/functions/packages.

create role cis_dbms_sql ;

grant execute on sys.dbms_sql to cis_dbms_sql;

grant cis_dbms_sql to user1;

Then pragmatically, remove the execute rights from public on a test system and check if the application still works as expected. Generate the revoke commands dynamically, and do not forget to also dynamically generate an undo script in case of problems:

SELECT 'revoke '||PRIVILEGE||' on '||owner||'.'||table_name||' from PUBLIC;'

FROM DBA_TAB_PRIVS

WHERE GRANTEE='PUBLIC' AND PRIVILEGE='EXECUTE' AND TABLE_NAME IN (

'DBMS_LDAP',' UTL_INADDR' ,'UTL_TCP', 'UTL_MAIL', 'UTL_SMTP', 'UTL_DBWS',

'UTL_ORAMTS','UTL_HTTP','HTTPURITYPE',

'DBMS_ADVISOR','DBMS_LOB','UTL_FILE',

'DBMS_CRYPTO','DBMS_OBFUSCATION_TOOLKIT', 'DBMS_RANDOM',

'DBMS_JAVA','DBMS_JAVA_TEST',

'DBMS_SCHEDULER','DBMS_JOB',

'DBMS_SQL', 'DBMS_XMLGEN', 'DBMS_XMLQUERY','DBMS_XMLSTORE','DBMS_XMLSAVE','DBMS_AW','OWA_UTIL','DBMS_REDACT',

'DBMS_CREDENTIAL'

);

SELECT 'grant '||PRIVILEGE||' on '||owner||'.'||table_name||' to PUBLIC;'

FROM DBA_TAB_PRIVS

WHERE GRANTEE='PUBLIC' AND PRIVILEGE='EXECUTE' AND TABLE_NAME IN (

'DBMS_LDAP',' UTL_INADDR' ,'UTL_TCP', 'UTL_MAIL', 'UTL_SMTP', 'UTL_DBWS',

'UTL_ORAMTS','UTL_HTTP','HTTPURITYPE',

'DBMS_ADVISOR','DBMS_LOB','UTL_FILE',

'DBMS_CRYPTO','DBMS_OBFUSCATION_TOOLKIT', 'DBMS_RANDOM',

'DBMS_JAVA','DBMS_JAVA_TEST',

'DBMS_SCHEDULER','DBMS_JOB',

'DBMS_SQL', 'DBMS_XMLGEN', 'DBMS_XMLQUERY','DBMS_XMLSTORE','DBMS_XMLSAVE','DBMS_AW','OWA_UTIL','DBMS_REDACT',

'DBMS_CREDENTIAL'

);

It has to be run in each PDB and CDB$ROOT.

If all works as expected, then it is fine.

Installation of patches and new componentsBut keep that in mind if you want to install something later. It may fail. For example, install an rman catalog:

RMAN> create catalog;

create catalog;

error creating dbms_rcvcat package body

RMAN-00571: ===========================================================

RMAN-00569: =============== ERROR MESSAGE STACK FOLLOWS ===============

RMAN-00571: ===========================================================

RMAN-06433: error installing recovery catalog

RMAN Client Diagnostic Trace file : /u01/app/oracle/diag/clients/user_oracle/RMAN_1732619876_110/trace/ora_rman_635844_0.trc

To create a valid rman catalog, you need to grant the execute right for UTL_HTTP, DBMS_LOB, DBMS_XMLGEN and DBMS_SQL directly to the rman user. Strange for me: it does not work if you grant it to a role (e.g. recovery_catalog_owner), but it works with a grant to public.

My recommendation to install new softare or patches is:

- Run the undo-script mentioned above (grant execute to public)

- Apply the Oracle or application patch or new application installation

- Check for invalid objects

- Run the hardening-script (revoke execute from public)

- Check for additional invalid objects and determine the missing grants

- Extend your hardening script with the required grants and re-run it.

Generally, the CIS hardening about revoking execute from public is possible. But it is very dangerous that the functionality of the application could be compromised. Especially with components that are used very rarely, this could only be noticed very late at best, e.g. in the case of end-of-year processing.

L’article Remove grant to public in Oracle databases est apparu en premier sur dbi Blog.

ECM: Low-code, high value

First, for those unfamiliar with the term, low-code is a software development approach that uses visual, drag-and-drop interfaces and pre-built components to create applications, rather than manual coding.

Low-code doesn’t always have a good reputation in the IT world because “real” programmers write code. But why reinvent the wheel?

I have worked in the ECM world for more than fifteen years and have seen many organizations rely on heavy custom development to adapt systems to their business needs.

It is often a work habit, but sometimes, it can also be a way of making our work more complicated than it needs to be. Complicating things allows to maintain control over customers (or management for internals) and their need for modifications or maintenance.

However, the reality is that low-code doesn’t mean low competencies. It requires a thorough understanding of existing features and how to optimize them.

This is especially true in a cloud environment, where we cannot control the update or upgrade cycle. Updates are frequently pushed (monthly for M-Files), bringing architectural changes, API adjustments, performance optimizations, and security enhancements. Custom code poses a permanent risk of unexpected breakdowns, compatibility issues, authentication errors, and API errors. Sometimes these issues occur silently after an update, and root cause analysis can be difficult.

What worked last month might suddenly stop working tomorrow, and you may not even know until a critical business process fails.

Every custom development requires regression testing, debugging after updates, code refactoring, documentation and QA validation. Since many companies don’t keep the original developer on staff, the risk grows every year.

In contrast, using embedded modules, like Compliance Kit or Properties Calculator for M-Files. They evolve with the platform, not against it.

Another cost factor is security and compliance responsibility. Adding custom code means adding a new piece of software to your ecosystem. You become responsible for its security and must ensure that it complies with data protection rules. Auditors may request documentation and evidence of validation.

On the other hand, native configuration tools are secure by design and part of the editor’s audited code base.

The rise of low-code ECM platformsTo address these issues, modern ECM vendors are moving toward low-code or configuration-driven models.

Low-code ECM platforms focus on:

- configuration instead of custom code

- rules instead of scripts

- metadata-driven logic instead of hard-coded business rules

- cloud compatibility from the ground up

This shift dramatically reduces implementation effort, accelerates time to value, and improves overall system stability.

When low-code matters the most

Low-code ECM becomes critical in situations such as:

- cloud deployments

- rapid prototyping and agile implementations

- regulated environments with strict audit requirements