Jeremy Schneider

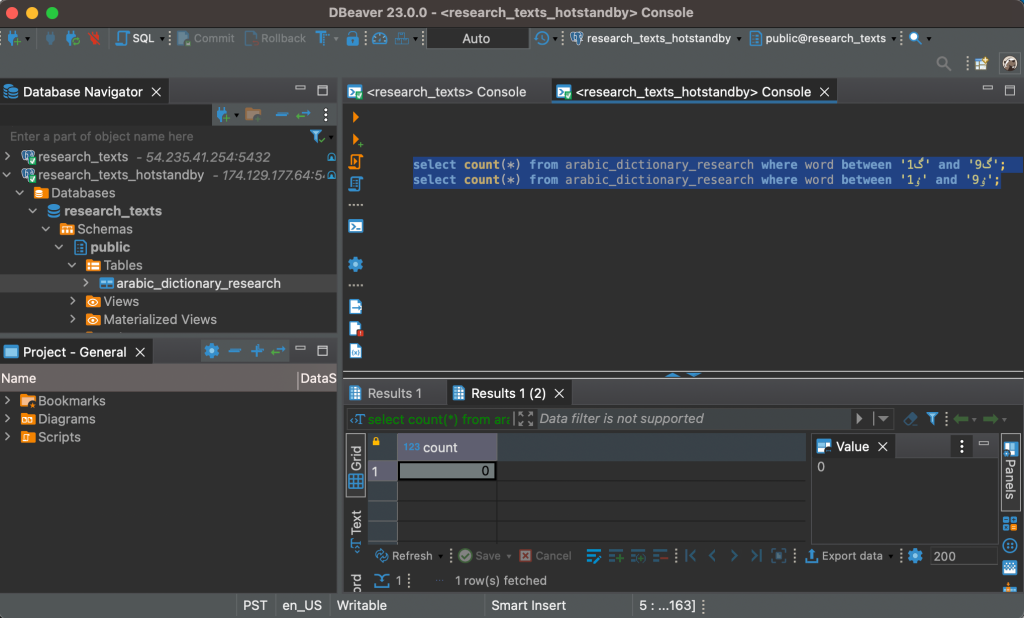

How Blocking-Lock Brownouts Can Escalate from Row-Level to Complete System Outages

This article is a shortened version. For the full writeup, go to https://github.com/ardentperf/pg-idle-test/tree/main/conn_exhaustion

This test suite demonstrates a failure mode when application bugs which poison connection pools collide with PgBouncers that are missing peer config and positioned behind a load balancer. PgBouncer’s peering feature (added with v1.19 in 2023) should be configured if multiple PgBouncers are being used with a load balancer – this feature prevents the escalation demonstrated here.

The failures described here are based on real-world experiences. While uncommon, this failure mode has been seen multiple times in the field.

Along the way, we discover unexpected behaviors (bugs?) in Go’s database/sql (or sqlx) connection pooler with the pgx client and in Postgres itself.

Sample output: https://github.com/ardentperf/pg-idle-test/actions/workflows/test.yml

The Problem in BriefGo’s database/sql allows connection pools to become poisoned by returning connections with open transactions for re-use. Transactions opened with db.BeginTx() will be cleaned up, but – for example – conn.ExecContext(..., "BEGIN") will not be cleaned up. PR #2481 proposes some cleanup logic in pgx for database/sql connection pools (not yet merged); I tested the PR with this test suite. The PR relies on the TxStatus indicator in the ReadyForStatus message which Postgres sends back to the client as part of its network protocol.

A poisoned connection pool can cause an application brownout since other sessions updating the same row wait indefinitely for the blocking transaction to commit or rollback its own update. On a high-activity or critical table, this can quickly lead to significant pile-ups of connections waiting to update the same locked row. With Go this means context deadline timeouts and retries and connection thrashing by all of the threads and processes that are trying to update the row. Backoff logic is often lacking in these code paths. When there is a currently running SQL (hung – waiting for a lock), pgx first tries to send a cancel request and then will proceed to a hard socket close.

If PgBouncer’s peering feature is not enabled, then cancel requests load-balanced across multiple PgBouncers will fail because the cancel key only exists on the PgBouncer that created the original connection. The peering feature solves the cancel routing problem by allowing PgBouncers to forward cancel requests to the correct peer that holds the cancel key. This feature should be enabled – the test suite demonstrates what happens when it is not.

Postgres immediately cleans up connections when it receives a cancel request. However, Postgres does not clean up connections when their TCP sockets are hard closed, if the connection is waiting for a lock. As a result, Postgres connection usage climbs while PgBouncer continually opens new connection that block on the same row. The app’s poisoned connection pool quickly leads to complete connection exhaustion in the Postgres server.

Existing connections will continue to work, as long as they don’t try to update the row which is locked. But the row-level brownout now becomes a database-level brownout – or perhaps a complete system outage (once the Go database/sql connection pool is exhausted) – because postgres rejects all new connection attempts from the application.

Result: Failed cancels → client closes socket → backends keep running → CLOSE_WAIT accumulates → Postgres hits max_connections → system outage

- The Problem in Brief

- Table of Contents

- Architecture

- The Test Scenarios

- Test Results

- Detection and Prevention

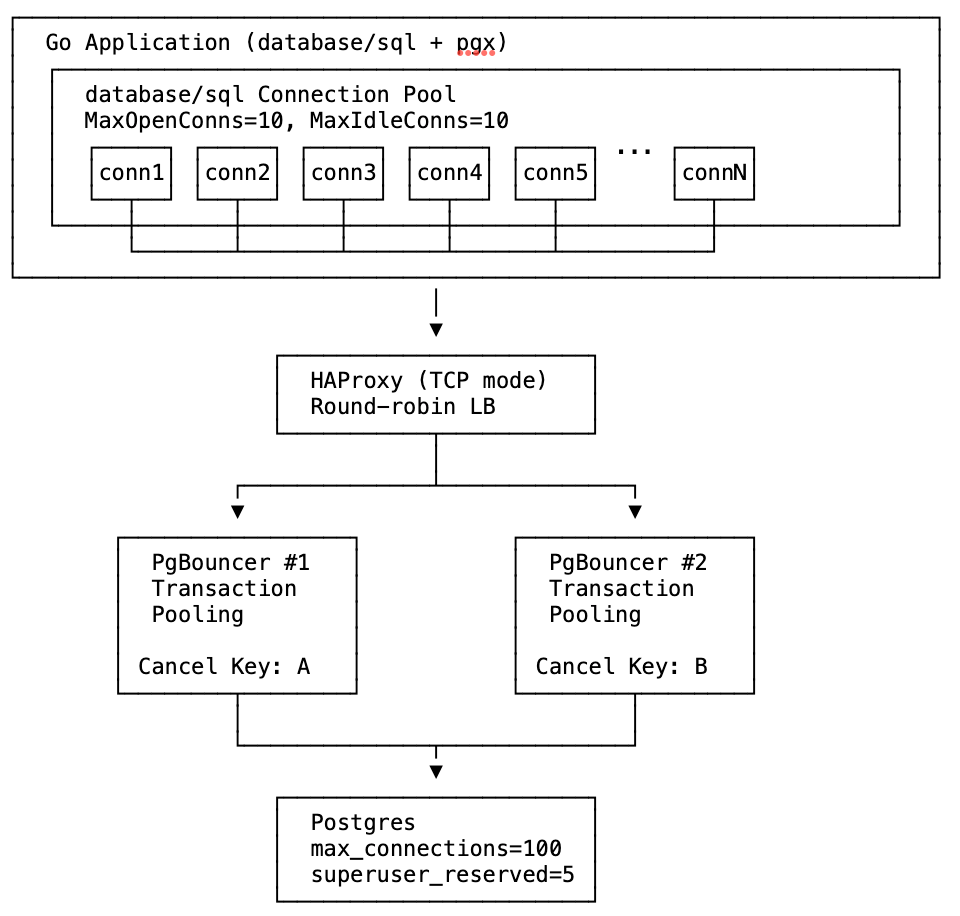

The test uses Docker Compose to create this infrastructure with configurable number of PgBouncer instances.

The Test Scenariostest_poisoned_connpool_exhaustion.sh accepts three parameters: <num_pgbouncers> <poison|sleep> <peers|nopeers>

In this test suite:

- The failure is injected 20 seconds after the test starts.

- Idle connections are aborted and rolled back after 20 seconds.

- Postgres is configured to abort and rollback any and all transactions if they are not completed within 40 seconds. Note that the

transaction_timeoutsetting (for total transaction time) should be used cautiously, and is available in Postgres v17 and newer.

TPS is the best indicator of actual application impact. It’s important to notice that PgBouncer peering does not prevent application impact from either poisoned connection pools or sleeping sessions. The section below titled “Detection and Prevention” has ideas which address the actual root cause and truly prevent application impact.

After the lock is acquired at t=20, TPS drops from ~700 to near zero in all cases as workers block on the locked row held by the open transaction.

Sleep mode (orange/green lines): Around t=40, Postgres’s idle_in_transaction_session_timeout (20s) fires and kills the blocking session. TPS recovers to ~600-700.

Poison mode (red/purple/blue lines): The lock-holding connection is never idle—it’s constantly being picked up by workers attempting queries—so the idle timeout never fires. TPS remains near zero until Postgres’s transaction_timeout (40s) fires at t=60, finally terminating the long-running transaction and releasing the lock.

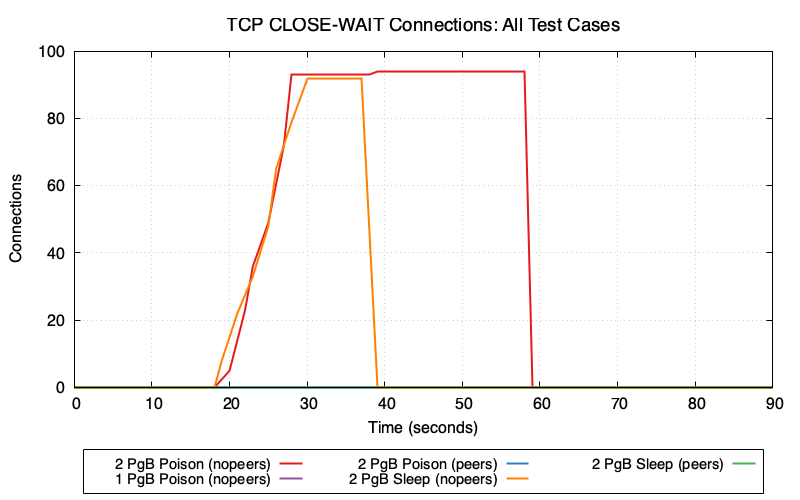

2 PgBouncers (nopeers) (red/orange lines): CLOSE_WAIT connections accumulate rapidly because:

- Cancel request goes to wrong PgBouncer → fails

- Client gives up and closes socket

- Server backend is still blocked on lock, hasn’t read the TCP close

- Connection enters CLOSE_WAIT state on Postgres

In poison mode (red), CLOSE_WAIT remains at ~95 until transaction_timeout fires at t=60. In sleep mode (orange), CLOSE_WAIT clears around t=40 when idle_in_transaction_session_timeout fires.

1 PgBouncer and peers modes (purple/blue/green lines): Minimal or zero CLOSE_WAIT because cancel requests succeed—either routing to the single PgBouncer or being forwarded to the correct peer.

Connection Pool Wait Time vs PgBouncer Client WaitGo’s database/sql pool tracks how long goroutines wait to acquire a connection (db.Stats().WaitDuration). PgBouncer tracks cl_waiting—clients waiting for a server connection. These metrics measure wait time at different layers of the stack.

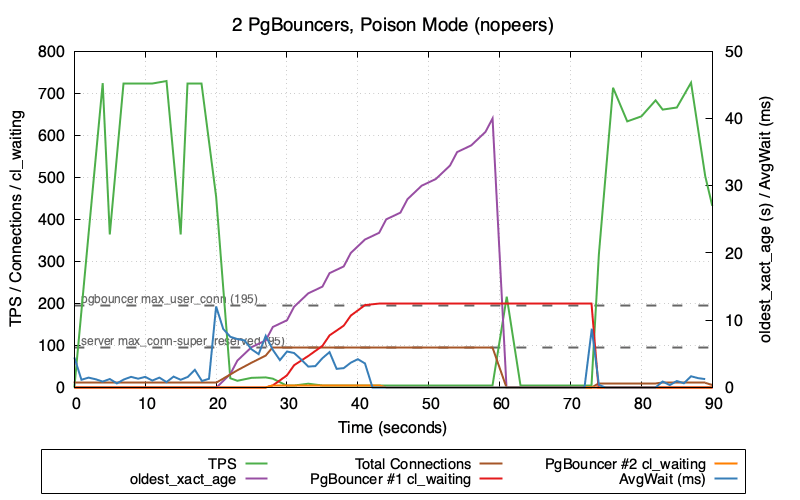

This graph shows 2 PgBouncers in poison mode (nopeers)—the worst-case scenario:

- TPS (green) crashes to near zero and stays there until

transaction_timeoutfires at t=60 - oldest_xact_age (purple) climbs steadily from 0 to 40 seconds

- Total Connections (brown) climb rapidly after poison injection at t=20 as failed cancels leave backends in CLOSE_WAIT

- Once Postgres hits

max_connections - superuser_reserved_connections (95), new connections are refused - PgBouncer #1 cl_waiting (red) and PgBouncer #2 cl_waiting (orange) then spike as clients queue up waiting for available connections

Note the gap between when transaction_timeout fires (t=60, visible as oldest_xact_age dropping to 0) and when TPS fully recovers. TPS recovery correlates with cl_waiting dropping back to zero—PgBouncer needs time to clear the queue of waiting clients and re-establish healthy connection flow. This recovery gap only occurs in nopeers mode; the TPS comparison graph shows that peers mode recovers immediately when the lock is released because connections never exhaust and cl_waiting stays at zero.

Why is AvgWait (blue) so low despite the system being in distress? The poisoned connection (holding the lock) continues executing transactions without blocking—it already holds the lock, so its queries succeed immediately. This one connection cycling rapidly through the pool with sub-millisecond wait times heavily skews the average lower, masking the fact that other connections are blocked.

The cl_waiting metric is collected as cnpg_pgbouncer_pools_cl_waiting from CloudNativePG. See CNPG PgBouncer metrics.

Monitoring and Alerting:

Alert on:

- Most Important:

cnpg_backends_max_tx_duration_secondsshowing transactions open for longer than some threshold cnpg_backends_totalshowing established connections at a high percentage ofmax_connections- Number of backends waiting on locks over some threshold

-- Count backends waiting on locks

SELECT count(*) FROM pg_stat_activity WHERE wait_event_type = 'Lock';

Prevention Options:

Options to prevent the root cause (connection pool poisoning):

- Find and fix connection leaks in the application – ensure all transactions are properly committed or rolled back

- Use

OptionResetSessioncallback – automatically discard leaked connections (see below) - Fix at the driver level – PR #2481 proposes automatic detection in pgx (not yet merged)

Options to prevent the escalation from row-level brownout to system outage:

- Enable PgBouncer peering – if using multiple PgBouncers behind a load balancer, configure the

peer_idand[peers]section so cancel requests are forwarded to the correct instance (see PgBouncer documentation). This prevents connection exhaustion but does not prevent the TPS drop from lock contention. - Use session affinity (sticky sessions) in the load balancer based on client IP – ensures cancel requests route to the same PgBouncer as the original connection (see HAProxy Session Affinity example below)

Options to limit the duration/impact:

- Set appropriate timeout defaults – configure system-wide timeouts to automatically terminate problematic sessions:

idle_in_transaction_session_timeout– terminates sessions idle in a transaction (e.g.,5min)transaction_timeout(Postgres 17+) – use caution; limits total transaction duration regardless of activity (e.g.,30min)

Potential Postgres Enhancement:

This would not address the root cause, but Postgres could better handle CLOSE_WAIT accumulation by checking socket status while waiting for locks. Since Postgres already checks for interrupts periodically (which is why cancels work), it’s possible that similar logic could detect forcibly closed sockets and clean up blocked backends sooner.

Results Summary, Understanding the Layers Leading to the System Outage, Unique Problems, and more - available in the full writeup at https://github.com/ardentperf/pg-idle-test/tree/main/conn_exhaustion

Postgres Booth at PASS Data Community Summit

PASS Data Community Summit 2025 wrapped up last week. This conference originated 25 years ago with the independent, user-led, not-for-profit “Professional Association for SQL Server (PASS)” and the annual summit in Seattle continues to attract thousands of database professionals each year. After the pandemic it was reorganized and broadened as a “Data Community” event, including a Postgres track.

Starting in 2023, volunteers from the Seattle Postgres User Group have staffed a postgres community booth on the exhibition floor. We provide information about Postgres User Groups around the world and do our best to answer all kinds of questions people have about Postgres. The booth consistently gets lots of traffic and questions.

The United States PostgreSQL Association has generously supplied one of their booth kits each year, which has a banner/background and some booth materials like stickers and a map with many user groups and a “welcome to postgres” handout and postgres major version handouts. We supplement with extra pins and stickers and printouts like the happiness hints I’ve put together, a list of common extensions that Rox made, and a list of Postgres events that Lloyd made. Every year, we also bring leftover Halloween candy that we want to get rid of and we put it in a big bowl on the table.

One of the top questions people ask is how and where they can learn more about Postgres. Next year I might just print out the Links section from my blog, which has a bunch of useful free resources. Another idea I have is for Redgate and EnterpriseDB – I think both of these companies have paid training but also give free access to a few introductory classes – it would be nice if they made a small card with a link to their free training. I think we could have a stack of these cards at our user groups and at the PASS booth. The company can promote paid training, but the free content can benefit anyone even if they aren’t interested in the paid training. I might also reach out to other companies who have paid training and see if they’d be willing to open up a bit of pre-recorded introductory content for free. (Data Egret? Creston Jamison?) Come to think of it, a list of weekly newsletters and podcasts might also be a great thing to print on a handout or a card – Postgres Weekly, postgres.fm, Talking Postgres, Scaling Postgres, etc.

The disk in the picture below is not from our booth; it’s an original SQL server installation disk and the crew over at Fortified apparently found a whole box of them on eBay and were handing them out over at their booth. As a result, I overheard someone explaining to another conference attendee what is a “floppy disk” and why does the bottom open. (In the background, on our booth table, you can see that I “fixed” the DocumentDB sticker…)

This year, I took my home office white board and drove it down to the convention center along with a bunch of magnets. Rick Lowe’s wife Becka picked up two wall-mount metal mesh file organizers and four S-hooks, which we hung on the white board and filled with handouts that Ben Chobot printed on his home printer. Thank you! This worked really well and you can see it in the picture below. It freed up space on the table for other things like pins and stickers, the raffle, and a very cool elephant that Lloyd brought.

As always: a huge shout-out to our local volunteers! From the left in the picture below: Lloyd Albin, me, Ben Chobot, Deon Gill, Rick Lowe, and… Pavlo Golub who is not technically local but joined us for our volunteer dinner/hangout! Harry Pierson missed our volunteer dinner but he’s on the right side in the booth picture above.

We raffled off a signed copy of Ryan Booz and Grant Fritchey’s new book: Introduction to PostgreSQL for the data professional. Congratulations to our winner – Tomi from Croatia!

Most of my time was at the booth. I had one speaking session on Friday, and spoke about CloudNativePG Quorum Failover. I originally intended to just expand the talk from KubeCon the week before. But I ended up heavily re-writing after realizing that out of 242 sessions at PASS there were only 6 that even mentioned Kubernetes. I ended up spending the first half of the talk with a simple introduction to containers and Kubernetes – a couple slides and then a terminal window with docker and kind to demonstrate the basics.

Finally, it was great fun to catch up with some old Oracle friends like Kellyn Gorman, Gustavo René Antúnez, Shane Borden and Gleb Otochkin. And of course it was great to see Lukas Fitl and Ryan Booz and Grant Fritchey. These are all solid, amazing people and if you ever see them at a conference then don’t hesitate to introduce yourself and strike up a conversation!

I enjoy traveling for conferences but I’m still in a season of limited travel for family reasons (and probably will be for awhile) – so I look forward to any time Postgres people visit Seattle. Helping organize the Postgres booth for PASS is a bit of work, but it’s worthwhile for the chance to connect. I look forward to seeing the Postgres track grow at PASS Data Community Summit!

KubeCon 2025: Bookmarks on Memory and Postgres

Just got home from KubeCon.

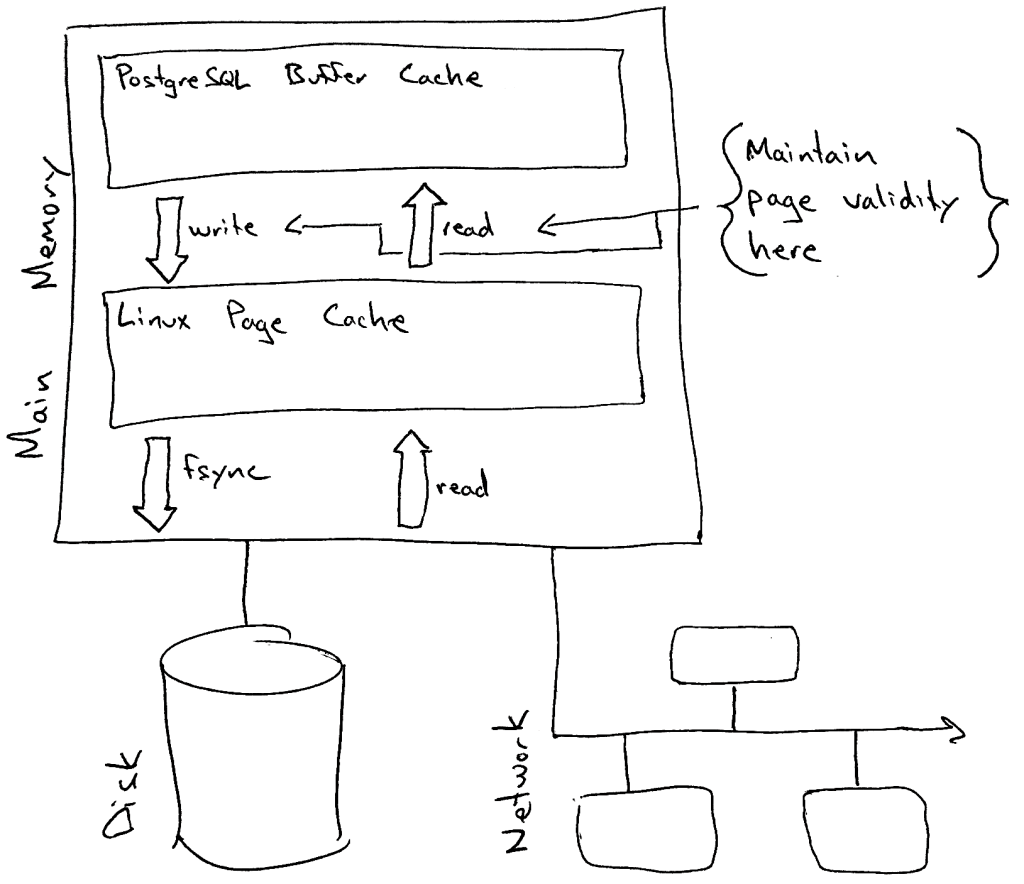

One of my big goals for the trip was to make some progress in a few areas of postgres and kubernetes – primarily around allowing more flexible use of the linux page cache and avoiding OOM kills with less hardware overprovisioning. When I look at Postgres on Kubernetes, I think there are idle resources (both memory and CPU) on the table with the current Postgres deployment models that generally use guaranteed QoS.

Ultimately this is about cost savings. I think we can still run more databases on less hardware without compromising the availability and reliability of our database services.

The trip was a success, because I came home with lots of reading material and homework!

Putting a few bookmarks here, mostly for myself to come back to later:

- key place for discussion is sig-node

- documentation on node-pressure eviction https://kubernetes.io/docs/concepts/scheduling-eviction/node-pressure-eviction/

- eviction signal thresholds can be customized

- it looks like priority classes give a lot of control over the order in which pods are evicted

- documentation on priority classes https://kubernetes.io/docs/concepts/scheduling-eviction/pod-priority-preemption/

- cgroups v2 memory controller documentation https://docs.kernel.org/admin-guide/cgroup-v2.html#memory

- long running github issue about pod evictions due to kubernetes (incorrectly?) interpreting active page cache as working memory that won’t be reclaimed https://github.com/kubernetes/kubernetes/issues/43916

- new feature MemoryQOS – still alpha (feature gate off-by-default)

- KEP https://github.com/kubernetes/enhancements/tree/master/keps/sig-node/2570-memory-qos/

- currently stalled – related message from Linux Kernel Mailing Lists https://lkml.org/lkml/2023/6/1/1300

- “Future: memory.high can be used to implement kill policies in for userspace OOMs, together with Pressure Stall Information (PSI). When the workloads are in stuck after their memory usage levels reach memory.high, high PSI can be used by userspace OOM policy to kill such workload(s).”

- Nov 2021 blog https://kubernetes.io/blog/2021/11/26/qos-memory-resources/

- May 2023 blog https://kubernetes.io/blog/2023/05/05/qos-memory-resources/

- Brief mention in docs https://kubernetes.io/docs/concepts/workloads/pods/pod-qos/#memory-qos-with-cgroup-v2

- KEP https://github.com/kubernetes/enhancements/tree/master/keps/sig-node/2570-memory-qos/

- metrics added to CAdvisor for both active and inactive page cache https://github.com/google/cadvisor/pull/3445

- metric added for PSI https://kubernetes.io/blog/2025/09/04/kubernetes-v1-34-introducing-psi-metrics-beta/

- homework – taking a closer look at anonymous memory and page cache metrics (both active and inactive) for real postgres databases on kubernetes

- homework – set up tests that emulate the diagram below and demonstrate the eviction behavior that i think will happen

I still have a lot of catching up to do. I sketched out the diagram below, but please take this with a large grain of salt – this aspect of kubernetes is complex and linux memory management is complex:

I tried to summarize some thoughts in a comment on the long-running github issue, but this might be wrong – it’s just what I’ve managed to piece together so far.

.

My “user story” is that (1) I’d like higher limit and more memory over-commit for page cache specifically – letting linux use available/unused memory as needed for page cache and (2) I’d like lower request to get scheduling closer to actual anonymous memory needs. I’m running Postgres. In the current state, I have to simultaneously set an artificially low limit on per-pod page cache (to avoid eviction) and artificially high request on per-pod anonymous memory (to avoid OOM by getting oom_score_adj). I’d like individual pods able to burst anonymous memory usage (eg. an unexpected SQL query that hogs memory), if we can steal from page cache of other pods beyond their request – avoiding OOM. The linux kernel can do this; I think it should be possible with the right cgroup settings?

It seems like the new Memory QOS feature might be assigning a static calculated value to memory.high – but for page cache usage, I wonder if we actually want kubernetes to dynamically adjust memory.high eventually as low as request in an attempt to reclaim node-level resources – before evicting end-user pods – when the memory.available eviction signal has exceeded the threshold?

Anyway it’s also worth pointing out that the postgres problems are likely accentuated by higher concentrations of postgres on nodes; if databases are spread across large multi-tenant clusters that likely mitigates things a bit.

Edit 11/29: Alexey Demidov replied on the github issue and pointed out the problem; the linux kernel throttles CPU of processes when we use memory.high so this probably makes my idea above ineffective.

Explaining IPC:SyncRep – Postgres Sync Replication is Not Actually Sync Replication

Postgres database-level “synchronous replication” does not actually mean the replication is synchronous. It’s a bit of a lie really. The replication is actually – always – asynchronous. What it actually means is “when the client issues a COMMIT then pause until we know the transaction is replicated.” In fact the primary writer database doesn’t need to wait for the replicas to catch up UNTIL the client issues a COMMIT …and even then it’s only a single individual connection which waits. This has many interesting properties.

One benefit is throughput and performance. It means that much of the database workload is actually asynchronous – which tends to work pretty well. The replication stream operates in parallel to the primary workload.

But an interesting drawback is that you can get into situations where the primary can speed ahead of the replica quite a bit before that COMMIT statement hits and then the specific client who issued the COMMIT will need to sit and wait for awhile. It also means that bulk operations like pg_repack or VACUUM FULL or REFRESH MATERIALIZED VIEW or COPY do not have anything to throttle them. They will generate WAL basically as fast as it can be written to the local disk. In the mean time, everybody else on the system will see their COMMIT operations start to exhibit dramatic hangs and will see apparent sudden performance drops – while they wait for their commit record to eventually get replicated by a lagging replication stream. It can be non-obvious that this performance degradation is completely unrelated to the queries that appear to be slowing down. This is the infamous IPC:SyncRep wait event.

Another drawback: as the replication stream begins to lag, the amount of disk needed for WAL storage balloons. This makes it challenging to predict the required size of a dedicated volume for WAL. A system might seem to have lots of headroom, and then a pg_repack on a large table might fill the WAL volume without warning.

This is a bit different from storage-level synchronous replication. With storage-level replication, each IO operation performing a write to the disk needs to be replicated. Postgres has a single WAL stream – so if any connection issues a COMMIT then postgres will immediately fsync the entire WAL stream up to that point – including all of the WAL for the bulk operation. In this way, the fsync works a little bit like the IPC:SyncRep wait – however I have a sense that fsync somehow introduces more backpressure into the system as a whole and likely provides at least a small amount of healthy throttling for large bulk operations.

When your workload consists ONLY of small short transactions, Postgres database-level replication can work really well and there’s back-pressure that keeps the database system in equilibrium. This Postgres database won’t lag because each individual transaction pauses. The problem is when you start injecting those big bulk operations with no back-pressure to throttle them.

This is also the reason why autovacuum_vacuum_cost_delay of zero can cause chaos and is a bad idea; it unleashes a vacuum running at full speed and generates massive & bursty amounts of WAL for large busy tables, as fast as it can write to the disk.

If you’re seeing the IPC:SyncRep wait event then one of the first things you should do is analyze your WAL activity. Something along these lines might be useful, if you’re debugging in real time (or add something similar to your monitoring system):

psql --csv -Xtc "create extension pg_walinspect"

psql --csv -Xtc "select now(),pg_current_wal_lsn()" >>wal-data.csv

while true; do

NEXTWAL=$(grep ^2025 wal-data.csv|tail -1|cut -d, -f2)

psql --csv -c "SELECT now(),pg_current_wal_lsn(),*

FROM pg_get_wal_stats('$NEXTWAL', pg_current_wal_lsn())" >>wal-data.csv

echo $(date) - $NEXTWAL

sleep 1

done

One potential idea for fixing this would be to add code into postgres vacuum and refresh materialized view and repack and copy which checks the value of the synchronous_commit parameter and performs periodic pauses according to how it’s set. This is a bit like the idea of doing “batch commits” during large bulk data loads, but we don’t need a real commit – we just need to periodically wait for the remote LSN to catch up, according to the value of synchronous_commit. This would provide a bit more healthy back-pressure to throttle those bulk operations, and might protect the rest of the system from such dramatic negative impact.

It might also be good to come up with some monitoring queries which can make it clear when a single connection is flooding the WAL stream with one bulk operation, versus an aggregate total across many write-heavy connections.

.

Sanitized SQL

A couple times within the past month, I’ve had people send me a message asking if I have any suggestions about where to learn postgres. I like to share the collection of links that I’ve accumulated (and please send me more, if you have good ones!) but another thing I always say is that the public postgres slack is a nice place to see people asking questions (Discord, Telegram and IRC also have thriving Postgres user communities). Trying to answer questions and help people out can be a great way to learn!

Last month there was a brief thread on the public postgres slack about the idea of sanitizing SQL and this has been stuck in my head for awhile.

The topic of sensitive data and SQL is actually pretty nuanced.

First, I think it’s important to directly address the question about how to treat databases schemas – table and column names, function names, etc. We can take our cue from the many industry vendors with data catalog, data lineage and data masking products. Schemas should be internal and confidential to a company – but they are not sensitive in the same way that PII or PCI data is. It’s generally okay to share schema information with vendors (for example, while working together on a support ticket for database performance). Within a company, it’s desirable for most schemas to be discoverable by engineers across multiple development teams – this is worth the benefits of better collaboration and better architecture of internal software.

General Principle: Schema = Source Code

Unfortunately, the versatile SQL language does not cleanly separate things. A SQL statement is a string that can mix keywords and schema and data all together. As Benoit points out in the slack thread – there are prepared (parameterized) statements, but you can easily miss a spot and end up with literal strings in queries. And I would add that most enterprises have the occasional need for manual “data fixes” which often involve simple scripts where literal values are common.

Benoit’s suggestion was to run a full parse of the query text. This is a good idea – in fact PgAnalyze already maintains a standalone open-source library which can be used to directly leverage Postgres’ query parser in many languages. This is really the best solution. However it is worth noting that I’m interested in cases of post-processing query texts from pg_stat_activity and pg_stat_statements, both of which have maximum lengths and will truncate text that’s longer. So query parsing would need to still work with truncated texts that throw syntax errors.

The PgAnalyze library approach is interesting, but I think a simple regex-based approach actually has a lot of merit. This can give very useful sanitized SQL for developers to debug, it has very low risk of exposing sensitive data, and the code is incredibly simple… especially compared with importing the entire postgres parser and trying to link to compiled C libraries in other languages!

Tonight I finally got around to a POC for this.

My design choices here were very intentional:

- I’m stripping out comments because libraries like sqlcommenter will add unique values via comments which break any ability to aggregate and summarize and report top queries or problem queries.

- I would always include the query_id alongside the sanitized SQL text. A user can always go back to the database later and look directly at pg_stat_statements to get the full query text as long as they have the Query ID.

- My decision to include the first three words (excluding comments) and two words following every occurrence of FROM is very strategic. In most cases (CTEs being the exception), the first three words will tell what kind of command is being executed – SELECT or DML or DDL or some utility/misc statement. By including two words after the command, we will generally see the table name for inserts and updates. By including words after FROM, we’ll know at least one of the tables being operated on for queries and deletes. This means we always know at a glance “it’s updating table X” or “it’s querying table Y”.

- When wait events indicate lock contention or increasing IO time, it’s extremely useful to see which tables are being operated on.

- There may be a few cases where this algorithm’s sanitized SQL isn’t as useful as it could be. But that’s why we include the Query ID for retrieving the full query text if needed – and my main goal here is just to have something that’s cheap/easy and helpful most of the time and that we can ensure is safe for developers and operators without requiring PII/PCI data controls.

- The likelihood of this algorithm emitting sensitive data is next-to-zero. We shouldn’t get literals from INSERTs or UPDATEs. Function and procedure calls must always include parentheses, so that’s mitigated with a simple regex to nuke anything after an open-parenthesis.

- If the string ‘FROM’ occurs in a string literal, then we aren’t going to distinguish that from a keyword. This is worth consideration; there is an injection vector here if you can spot it. But I don’t think it’s worthwhile to get fancy and attempt to parse SQL via regex. (As fun as that would be, simplicity/readability/maintainability wins here.) The SQL language is insanely sophisticated and if we’re going to parse then it’s the PgAnalyze way. But in practice, the actual surface area and exposure/leak risk with this regex-based function is very small and likely can be mitigated.

- This does not lessen the importance of good coding practices like parameterized SQL. This is just an additional layer of defense on top of that. Values correctly passed through parameterized SQL will never appear in a query text in the first place.

https://gist.github.com/ardentperf/44e94ac484e53ff8353f6c1dc0b8f272

Here’s what the code looks like:

CREATE OR REPLACE FUNCTION sanitize_sql(sql_text text)

RETURNS text AS $$

DECLARE

cleaned_text text;

first_part_regex_3words text := '([^[:space:]]+)[[:space:]]+([^[:space:]]+)[[:space:]]+([^[:space:]]+)';

first_part_regex_2words text := '([^[:space:]]+)[[:space:]]+([^[:space:]]+)';

first_part_regex_1words text := '([^[:space:]]+)';

first_part text;

match_array text;

from_parts_regex_3words text := '(FROM)[[:space:]]+([^[:space:]]+)[[:space:]]*([^[:space:]]*)';

from_parts text := '';

BEGIN

-- Remove multi-line comments (/* ... */)

cleaned_text := regexp_replace(sql_text, '/\*.*?\*/', '', 'g');

-- Remove single-line comments (-- to end of line)

cleaned_text := regexp_replace(cleaned_text, '--.*?(\n|$)', '', 'g');

-- Extract the first keyword and up to two words after it

first_part := array_to_string(regexp_match(cleaned_text,first_part_regex_3words),' ');

if first_part is null or first_part ILIKE '% FROM %' or first_part ILIKE '% FROM' then

first_part := array_to_string(regexp_match(cleaned_text,first_part_regex_2words),' ');

if first_part is null or first_part ILIKE '% FROM' then

first_part := array_to_string(regexp_match(cleaned_text,first_part_regex_1words),' ');

end if;

end if;

first_part := regexp_replace(first_part, '\(.*','(...)');

-- Find all occurrences of FROM and two words after each

FOR match_array IN

SELECT array_to_string(regexp_matches(cleaned_text,from_parts_regex_3words,'gi'),' ')

LOOP

match_array := regexp_replace(match_array, '\(.*','(...)');

from_parts := from_parts || '...' || match_array;

END LOOP;

-- Return combined result

RETURN first_part || from_parts;

END;

$$ LANGUAGE plpgsql;

postgres=# SELECT sanitize_sql($test$

SELECT pgp_sym_encrypt('123-45-6789', 'my_secret_key') AS encrypted_ssn;

$test$);

sanitize_sql

-----------------------------

SELECT pgp_sym_encrypt(...)

(1 row)

postgres=# SELECT sanitize_sql($test$

-- Fetch active users only

SELECT id, name -- user info

FROM users /* main table */

WHERE active = TRUE; /* status flag */

$test$);

sanitize_sql

------------------------------------

SELECT id, name...FROM users WHERE

(1 row)

postgres=# SELECT sanitize_sql($test$

SELECT id, name

FROM users

WHERE id IN (

/* subquery for high-value customers */

SELECT user_id -- link to users.id

FROM orders

WHERE total > 100 -- filter expensive orders

);

-- end of query

$test$);

sanitize_sql

--------------------------------------------------------

SELECT id, name...FROM users WHERE...FROM orders WHERE

(1 row)

postgres=# SELECT sanitize_sql($test$

-- recent orders per user

WITH recent_orders AS (

SELECT user_id, MAX(created_at) AS last_order

FROM orders

GROUP BY user_id /* aggregation */

)

SELECT u.name, r.last_order

FROM users u

JOIN recent_orders r ON u.id = r.user_id; -- join results

$test$);

sanitize_sql

----------------------------------------------------------

WITH recent_orders AS...FROM orders GROUP...FROM users u

(1 row)

postgres=# SELECT sanitize_sql($test$

INSERT INTO users (name, email, created_at)

VALUES (

'Alice', -- first name

'alice@example.com', /* email */

NOW() /* timestamp */

);

-- new user inserted

$test$);

sanitize_sql

-------------------

INSERT INTO users

(1 row)

postgres=# SELECT sanitize_sql($test$

UPDATE users

SET last_login = NOW() -- set current time

WHERE id = 42 /* target specific user */; -- done

$test$);

sanitize_sql

------------------

UPDATE users SET

(1 row)

postgres=# SELECT sanitize_sql($test$

/*

* Delete old sessions.

* Keep data from the last 30 days.

* Be careful: irreversible.

*/

DELETE FROM sessions

WHERE last_access < NOW() - INTERVAL '30 days';

$test$);

sanitize_sql

------------------------------

DELETE...FROM sessions WHERE

(1 row)

postgres=# SELECT sanitize_sql($test$

-- Upsert settings

INSERT INTO user_settings (user_id, theme, notifications)

VALUES (

1, /* user id */

'dark', -- theme

TRUE -- notifications on

)

ON CONFLICT (user_id)

DO UPDATE

SET theme = EXCLUDED.theme, -- overwrite

notifications = EXCLUDED.notifications;

$test$);

sanitize_sql

---------------------------

INSERT INTO user_settings

(1 row)

postgres=# SELECT sanitize_sql($test$

-- mark inactive users

WITH inactive_users AS (

SELECT id

FROM users

WHERE last_login < NOW() - INTERVAL '1 year' /* cutoff */

)

UPDATE users

SET active = FALSE

WHERE id IN (

SELECT id FROM inactive_users -- CTE reference

);

$test$);

sanitize_sql

--------------------------------------------------------------------

WITH inactive_users AS...FROM users WHERE...FROM inactive_users );

(1 row)

postgres=# SELECT sanitize_sql($test$

-- create table if missing

CREATE TABLE IF NOT EXISTS audit_log ( /* audit records */

id SERIAL PRIMARY KEY, -- identity column

user_id INT REFERENCES users(id), /* FK */

action TEXT NOT NULL, -- what happened

created_at TIMESTAMP DEFAULT NOW() /* timestamp */

);

$test$);

sanitize_sql

-----------------

CREATE TABLE IF

(1 row)

postgres=# SELECT sanitize_sql($test$

/*

This query finds top customers.

It uses multiple CTEs and subqueries.

*/

WITH order_totals AS (

SELECT user_id, SUM(total) AS lifetime_value

FROM orders

GROUP BY user_id -- one row per user

),

top_customers AS (

SELECT user_id

FROM order_totals

WHERE lifetime_value > 10000 /* threshold */

)

SELECT u.id, u.name, o.lifetime_value -- main output

FROM users u

JOIN order_totals o ON u.id = o.user_id

WHERE u.id IN (SELECT user_id FROM top_customers)

ORDER BY o.lifetime_value DESC /* high to low */

LIMIT 10; -- top 10

$test$);

sanitize_sql

---------------------------------------------------------------------------------------------------------------

WITH order_totals AS...FROM orders GROUP...FROM order_totals WHERE...FROM users u...FROM top_customers) ORDER

(1 row)

postgres=# SELECT sanitize_sql($test$

SELECT * FROM generate_series(1,10);

$test$);

sanitize_sql

--------------------------------------

SELECT *...FROM generate_series(...)

(1 row)

postgres=# SELECT sanitize_sql($test$

DO $$

DECLARE

tbl RECORD;

BEGIN

OPEN table_cursor;

LOOP

FETCH table_cursor INTO tbl;

EXIT WHEN NOT FOUND;

EXECUTE 'VACUUM ' || tbl.tablename;

END LOOP;

CLOSE table_cursor;

END $$;

$test$);

sanitize_sql

---------------

DO $$ DECLARE

(1 row)

postgres=# SELECT sanitize_sql($test$

-- Declare a cursor for employees in Engineering

DECLARE emp_cursor CURSOR FOR

SELECT id, name, salary

FROM employees

WHERE department = 'Engineering';

$test$);

sanitize_sql

--------------------------------------------------

DECLARE emp_cursor CURSOR...FROM employees WHERE

(1 row)

postgres=# SELECT sanitize_sql($test$

SELECT

c.name AS customer_name,

o.order_id,

o.order_date,

oi.product_name,

oi.quantity,

'Orders coming from customers are listed below' AS description

FROM customers c, orders o, order_items oi

WHERE c.customer_id = o.customer_id

AND o.order_id = oi.order_id

ORDER BY c.name, o.order_date;

$test$);

sanitize_sql

-----------------------------------------------------------

SELECT c.name AS...from customers are...FROM customers c,

(1 row)

.

Seattle Postgres User Group Video Library

Are you in the Pacific Northwest?

- Thursday Nov 6 at 6pm Bohan Zhang – a member of the technical staff at OpenAI – is returning to speak again at the Seattle Postgres User Group! This time, Bohan will be talking about how OpenAI runs Postgres – challenges faced, lessons learned from outages, and strategies implemented to scale PostgreSQL to handle millions of queries per second (QPS).

- Seattle Systems meetup still needs meetup locations to host us. If you are a company in the Seattle area with space for an in-depth technical talk and 50-100 people, then please reach out to Sitesh (I can help get you in touch).

Since January 2024 we’ve been recording the presentations at Seattle Postgres User Group. After some light editing and an opportunity for the speaker to take a final pass, we post them to YouTube. I’m perpetually behind (I do the editing myself) so you won’t find the videos from this fall yet – but we do have quite a few videos online! Many of these are talks that you can’t find anywhere else. We definitely love out-of-town speakers – but an explicit goal of the user group is also to be an easy place for folks here in Seattle to share what we know with each other, and to be an easy place for people to try out speaking with a small friendly group if they never have before.

https://www.youtube.com/@seattle-postgres

DateSpeakerTitleJune 12 2025Noah BaculiFrom Side Projects: Why We Chose Rust for Postgres + AI (YouTube)May 7 2025Gwen ShapiraRe-engineering Postgres for Millions of Tenants (YouTube)April 10 2025Jonathan KatzVectors: Best practices for a nasty data type (YouTube)March 6 2025Harry PiersonTime Travel Queries with Postgres (YouTube)February 6 2025Rishu BaggaManaging Transaction Metadata in PostgreSQL (YouTube)December 5 2024Kellyn GormanBenchmarking PostgreSQL-Compatible DBs with HammerDB (YouTube)October 10 2024Saraj MunjalDDL Schema Migrations: Navigating the High-Scale Seas (YouTube)September 5 2024Ben ChobotSecure pgBouncer: Break Up With Passwords and Hook Up with AWS Aurora (YouTube)June 6 2024Deon GillSo You Want to Build a Postgres Server (YouTube)May 2 2024Eric LendvaiDatawharf and Wharf Systems (YouTube)April 4 2024Jerry SievertDeveloping Your Own Postgres Database Extensions (YouTube)March 7 2024Bohan ZhangThe Part Of PostgreSQL I Hate The Most: MVCC and How To Optimize It (YouTube)January 18 2024Chelsea DoleIt’s Not You, It’s Me: “Breaking Up” With Massive Tables via Partitioning (YouTube)March 2 2023—Thoughts & Opportunities – Seattle PostgreSQL User Group (intro) (YouTube)By the way – if you’re wondering why our logo is a giant pink elephant, it’s because this is actually a well-known real historical landmark in Seattle!

https://www.meetup.com/seattle-postgres/

A couple people have noticed that we haven’t taken down the old website (which predated the meetup.com site) at https://seapug.org/ … we’ve been talking for several years now about updating this site but we’re all so busy that nothing has happened yet. Regardless, this site is an interesting window into Seattle Postgres User Group meetups all the way back to 2009 (!!)

Testing CloudNativePG Preferred Data Durability

This is the third post about running Jepsen against CloudNativePG. Earlier posts:

- Run Jepsen against CloudNativePG to see sync replication prevent data loss

- Losing Data is Harder Than I Expected

First: shout out to whoever first came up with Oracle Data Guard Protection Modes. Designing it to be explained as a choice between performance, availability and protection was a great idea.

Yesterday’s blog post described how the core of all data safety is copies of the data, and the importance of efficient architectures to meet data safety requirements.

With Postgres, three-node clusters ensure the highest level of availability if one host fails. But two-node clusters are often worth the cost savings in exchange for a few seconds of unavailability during cluster reconfigurations. Similar to Oracle, Postgres two-node clusters can be configured to maximize performance or availability or protection.

Oracle Data Guard modeBehaviorPatroni configurationCloudNativePG configurationMax Performanceoracle defaultAsync; fastest commits; possible data loss on failoverpatroni defaultcnpg defaultMax Availability (NOAFFIRM)Sync when standby available; acknowledge after standby write (not flush); if none available, don’t block

synchronous_mode: truesynchronous_commit: remote_writemethod: anynumber: 1dataDurability: preferredsynchronous_commit: remote_writeMax Availability (AFFIRM)Sync when standby available; acknowledge after standby flush; if none available, don’t blocksynchronous_mode: truemethod: anynumber: 1dataDurability: preferredMax ProtectionAlways sync; if no sync standby, block commits (no data loss)synchronous_mode: truesynchronous_mode_strict: truemethod: anynumber: 1

Automated failovers can involve a small amount of data loss with maximum performance and maximum availability configurations. With Oracle Fast-Start Failover, the FastStartFailoverLagLimit configuration property indicates the maximum amount of data loss that is permissible in order for an automatic failover to occur.

The previous blog post in this series compared CloudNativePG Max Performance and Max Protection modes. Now I want to take a look at Max Availability. In CloudNativePG, the key setting here is spec.postgresql.synchronous.dataDurability. When dataDurability is set to preferred, the required number of synchronous instances adjusts based on the number of available standbys. PostgreSQL will attempt to replicate WAL records to the designated number of synchronous standbys, but write operations will continue even if fewer than the requested number of standbys are available.

All of these experiments were executed on my HP EliteBook (Ryzen Pro 5) with two CNPG Lab VMs via Hyper‑V and the tests ran in a loop for 12–24 hours to aggregate failure rates across the runs.

Experiment 1Using the same test harness as before to indice rapid failures. The test harness waits for all replicas to be READY (per k8s) and then immediately kills the writer.

Hypothesis: in max protection mode we won’t see any data loss, but we will see data loss in max availability mode. Adding a third node to the cluster should reduce the likelihood of data loss.

dataDurabilityinstancesruns showing data lossrequired20% [results]preferred248% [results]preferred34% [results]Findings: Setting dataDurability: preferred in CloudNativePG allows for higher availability but can result in data loss during failover, especially in smaller clusters. I was surprised how much the third node helped.

Hypothesis A: I was seeing a high failure rate specifically because the rapid failures were triggering a failover before CloudNativePG had enough time to restart synchronous replication after the last failure. If there are 60 seconds between each failure, then we shouldn’t see any data loss.

Hypothesis B: CloudNativePG has a failoverDelay setting which can inject a delay before the CNPG reconciliation loop triggers a failover when the primary is unhealthy. If we set this to 60 seconds then we shouldn’t see any data loss.

nb. I also switched to running the latest development build from the trunk of CloudNativePG. (Separately, I had wanted to test some code that was checked in the day before I ran these tests.)

seconds between killsfailoverDelayruns showing data loss0040% [results]6004% [results]0600% [results]Findings: Introducing a delay – either by spacing out failures or by configuring failoverDelay – dramatically reduced or eliminated data loss in preferred mode. When failures occurred back-to-back with no delay, data loss was frequent. However, waiting 60 seconds between failures, or setting a 60-second failoverDelay, allowed CloudNativePG enough time to reestablish synchronous replication, resulting in little or no data loss.

CloudNativePG’s preferred data durability mode offers data safety and high availability with lower-cost two-node clusters by allowing commits to proceed even if the synchronous standby is temporarily unavailable. However, this flexibility comes with a small risk of data loss during failover, especially when failures happen in rapid succession. Introducing delays via the failoverDelay setting minimizes risk. For environments where data durability is paramount, three-node clusters in required mode remain the safest choice, but for those willing to trade a small risk of data loss for improved availability, two-node clusters in preferred mode can be a practical option. Consider setting failoverDelay alongside preferred durability for extra safety.

Data Safety on a Budget

Many experienced DBAs joke that you can boil down the entire job to a single rule of thumb: Don’t lose your data. It’s simple, memorable, and absolutely true – albeit a little oversimplified.

Mark Porter’s Cultural Hint “The Onion of our Requirements” conveys the same idea with a lot more accuracy:

We need to always make sure we prioritize our requirements correctly. In order, we think about Security, Durability, Correctness, Availability, Scalability via Scale-out, Operability, Features, Performance via Scaleup, and Efficiency. What this means is that for each item on the left side, it is more important than the items on the right side.

But this does not tell the whole story. If we’re honest, there is one critical principle of equal importance to everything on this list: Don’t lose all your money.

Every adult who’s managed their own finances knows we don’t have infinite money. Yes we want to keep the data safe. We also want to be smart about spending our money.

Relational databases are one of the most powerful and versatile places to store your data – and they are also one of the most expensive places to store your data. Just look at the per-GB pricing of block storage with provisioned IOPS and low latency, then compare with the pricing of object storage. No contest. Any time a SQL database is beginning to approach the TB range, we definitely should be looking at the largest tables and asking whether significant portions of that data can be moved to cheaper storage – for example parquet files on S3. (Or F3 files?)

Of course, sometimes we need fast powerful SQL and joins and transactions. So relational databases also should run as efficiently as possible. This has direct implications around how we keep the data safe.

From personal photos to enterprise databases, the core of all data safety is copies of the data. Logs and row-store/column-store files (and indexes) are data copies in different formats. You could almost parse the entire database industry through a lense that compares how each technology is just a unique way to replicate data between different formats and places. The revered and time-honored “3-2-1 Backup Rule” is all about copies of the data. From an information theory standpoint, it can be argued that even RAID5 parity, checksums, CRCs, and hashes are a shadow or fingerprint “copy” of the original – even though they aren’t literal full copies of the data.

One of my favorite cultural hints from Mark is: Don’t Let Entropy Win.

In the absence of people making things better, they will get worse. It’s just a fact.

This isn’t Mark’s point, but I think it’s a related concept: at every business that’s successful enough to grow large, there is a natural gravitation toward forming silos of technology. I think of this as a kind of entropy that we need to actively counteract in every large business. Lets look at an example where an enterprise business team building a public API needs a 600GB write-intensive database. Suppose we can buy enterprise grade high-endurance NVMe SSDs (handling write-intensive database workloads) for $1000 each. How much will the storage cost to “keep the data safe” for this public API?

- The business team provisions three environments: one for production and two more for development and testing.

- For business continuity in case of regional problems, the database team creates primary and replica CloudNativePG clusters, so that we are able to run from either of our two regions.

- To maintain high availability, the database team configures CloudNativePG with three instance within each region and they configure preferred anti-affinity so that kubernetes will attempt to schedule the three instances in different buildings or availability zones.

- Persistent storage is provided by the storage team who configures ceph volumes backed by two mirror copies.

- Object storage for backups uses two mirror copies.

- Servers are built by the infrastructure team who configure RAID 1 (mirroring).

In the worst case, we can easily end up spending $96,000 on disks alone – for a database that can fit on a single $1000 enterprise drive! Now that is some crazy storage amplification.

In order to take a smarter approach, lets work backwards from the problems we’re solving. When we say “keep the data safe” – what are some specific situations we want to protect the data from?

- Unavailability during maintenance & deployments at all levels of the stack

- Operational mistakes

- Software bugs at all levels of the stack, from business app to firmware

- Hardware failures of disks

- Hardware failures of servers/compute which can make good disks temporarily inaccessable

- External threats from direct attacks, malware, social engineering, supply chain attacks, etc

- Insider threats arising from situations like personal grievances or personal financial pressures

- Natural disasters (and perhaps political disasters…)

Armed with a list, we can now ask ourselves: what is an economical solution that addresses everything here? There isn’t one right answer but we probably don’t need 12 physical copies of each database per data center. A few ideas:

- Three CNPG instances that use local SSD storage directly (no hardware RAID), for a total of three copies in the primary data center.

- Two or three CNPG instances that use either ceph block storage or local SSD with hardware RAID (but not both) for a total of four or six copies in the primary data center.

- A single CNPG instance in the second data center, with the capability to dynamically add instances on switchovers/failovers.

- Slower, less expensive disks for development databases.

- No CNPG instance for immediate switchover/failover of development databases in second data center.

- Testing tier that matches production config but can be provisioned on demand from backups for load testing, and deprovisioned when unused for some period of time. Development tier also provisioned on demand and deprovisioned when unused for some period of time.

There are many ways to keep data safe on a reasonable budget – these are just a few ideas.

Postgres Replication Links

Our platform team has a regular meeting where we often use ops issues as a springboard to dig into Postgres internals. Great meeting today – we ended up talking about the internal architecture of Postgres replication. Sharing a few high-quality links from our discussion:

Alexander Kukushkin’s conference talk earlier this year, which includes a great explanation of how replication works

- https://posetteconf.com/speakers/alexander-kukushkin/

- https://www.youtube.com/watch?v=PFn9qRGzTMc

- https://www.postgresql.eu/events/pgconfde2025/sessions/session/ 6559-myths-and-truths-about-synchronous-replication-in-postgresql/

- https://www.postgresql.eu/events/pgconfde2025/sessions/session/ 6559/slides/663/Myths%20and%20Truths%20about%20 Synchronous%20Replication%20in%20PostgreSQL.pdf

Alexander’s interview on PostgresTV with Nik Samokhvalov

PostgresFM episode about synchronous_commit

Postgres Documentation for pg_stat_replication system catalog (most important source of replication monitoring data)

CloudNativePG source code that translates pg_stat_replication data into prometheus metrics

- https://github.com/cloudnative-pg/cloudnative-pg/blob/main/config/manager/default-monitoring.yaml#L385

Chapter about streaming replication in Hironobu Suzuki’s book, Internals of PostgreSQL

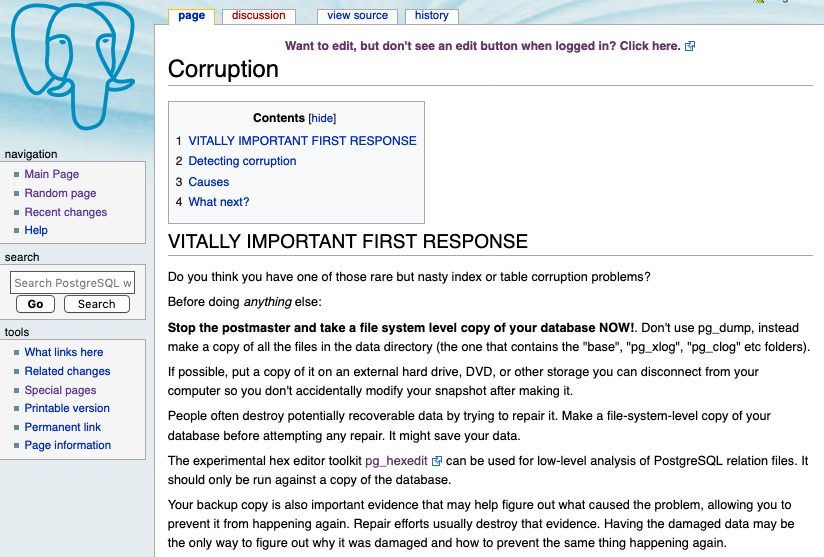

Here is very helpful diagram from Alexander’s slide deck, which we referenced heavily during our discussion.

Can you identify exactly where in this diagram the three lag metrics come from? (write lag, flush lag and replay lag)

Losing Data is Harder Than I Expected

This is a follow‑up to the last article: Run Jepsen against CloudNativePG to see sync replication prevent data loss. In that post, we set up a Jepsen lab to make data loss visible when synchronous replication was disabled — and to show that enabling synchronous replication prevents it under crash‑induced failovers.

Since then, I’ve been trying to make data loss happen more reliably in the “async” configuration so students can observe it on their own hardware and in the cloud. Along the way, I learned that losing data on purpose is trickier than I expected.

Methodology and a Kubernetes caveatTo simulate an abrupt primary crash, the lab uses a forced pod deletion, which is effectively a kill -9 for Postgres:

kubectl delete pod -l role=primary --grace-period=0 --force --wait=false

This mirrors the very first sanity check I used to run on Oracle RAC clusters about 15 years ago: “unplug the server.” It isn’t a perfect simulation, but it’s a simple, repeatable crash model that’s easy to reason about.

I should note that the label role is deprecated by CNPG and will be removed. I originally used it for brevity, but I will update the labs and scripts to use the label cnpg.io/instanceRole instead.

After publishing my original blog post, someone pointed out an important Kubernetes caveat with forced deletions:

Irrespective of whether a force deletion is successful in killing a Pod, it will immediately free up the name from the apiserver. This would let the StatefulSet controller create a replacement Pod with that same identity; this can lead to the duplication of a still-running Pod

https://kubernetes.io/docs/tasks/run-application/force-delete-stateful-set-pod/

This caveat would apply to the CNPG controller just like a StatefulSet controller. In practice, for my tests, this caveat did not undermine the goal of demonstrating that synchronous replication prevents data loss. The lab includes an automation script (Exercise 3) to run the 5‑minute Jepsen test in a loop for many hours and collect results automatically.

Hardware used included an inexpensive HP EliteBook (Ryzen Pro 5, $299 on Amazon) with two CNPG Lab VMs via Hyper‑V, plus multiple cloud instance types. I ran long‑burner loops (8–20 hours) and aggregated failure rates across configurations.

I’m considering bringing Chaos Mesh into the lab in the future, but for now I’m sticking with the explicit crash model above because it’s easy for folks to see exactly what it does.

High‑level results:

- With synchronous replication: 1,061 five‑minute runs, 0 data‑loss failures.

- With asynchronous replication: 1,448 runs, 478 data‑loss failures.

These are the total counts across all runs from three different sets of experiments.

Experiment 1: Checkpoints and replica countHypothesis A: Increase replication traffic (shorter checkpoints which causes more FPWs) to raise odds of “unshipped” WAL at crash ⇒ more losses with async.

Hypothesis B: Fewer replicas (2 instances total instead of 3) might make losses more likely.

Each row below shows the fraction of async runs that showed data loss.

I also ran two of the configurations with sync replication enabled. No data loss was observed in either of the runs with sync replication.

Checkpoint3 instances2 instances5 min (default)5% [async results] / 0% [sync]24% [async results]30 second5% [async results]15% [async results] / 0% [sync]Findings: Hypothesis B was right—2 instances amplified data loss. Hypothesis A was wrong—shorter checkpoints did not increase loss rates here and even correlated with slightly fewer losses.

Experiment 2: Jepsen rate and thread countI varied the transaction rate and the number of client threads. My intuition was that higher rates would increase the chance of a commit landing during a crash window, and that fewer threads might improve per‑thread throughput (given CPU saturation).

Rate50 threads20 threads100024% [cf. experiment 1]8% [results]200051% [results]38% [results]300080% [results]39% [results]4000N/A [results]N/AFindings: Higher rates increased loss frequency (as expected). Reducing thread count lowered CPU pressure and but surprisingly it also reduced loss frequency—even when achieving similar rates. The “4000” rate did not complete successfully; Jepsen analysis stalled and timed out.

The most reliable async configuration for provoking visible loss so far: 2 instances total, rate 3000, 50 threads.

Experiment 3: Hardware differences

Experiment 3: Hardware differences

To ensure reproducibility beyond my laptop, I repeated runs on several cloud instance types.

HardwareasyncsyncAWS m7g26% [results]0% [results]AWS m6g23% [results]0% [results]Azure Dpsv651% [results]0% [results]HP Elitebook (Ryzen 5675U)75% [results]0% [results]I didn’t expect the spread in async failure rates. My current guess is that some combination of CPU and/or IO saturation characteristics change the window for unreplicated commits. The takeaway for teachers and students: if you want to reliably see data loss, Azure Dpsv6 performed best in my runs (about half of iterations saw data loss).

What this means- Synchronous replication remains the guardrail. Across thousands of minutes of testing, I did not observe a single instance of data loss with sync enabled under these test configurations.

- Topology matters. Two instances (one replica) increases the chance of async loss versus three instances.

- Workload shape matters. Higher rates raise loss frequency; fewer client threads can reduce it even at similar throughput.

- Hardware matters. Different CPU/IO profiles change how often you’ll catch an in‑flight commit during a crash.

Use the CloudNativePG LAB and Exercise 3 to run the Jepsen “append” workload and induce rapid primary failures. The looped test and automatic report upload are included. If your goal is to demonstrate loss in async mode, start with:

- 2 instances

- rate 3000

- 50 threads

If Jepsen analysis is stalling and timing out then try reducing the rate to 2000. And if you have the option, try Azure Dpsv6 for the highest chance of observing loss quickly.

Waiting for Postgres 18 – Docker Containers 34% Smaller

On February 25, 2025 Christoph Berg committed the patch:

Subject: [PATCH] Move JIT to new postgresql-18-jit package. (Closes: #927182)

Make LLVM architectures a inclusion list so it works in the Architecture field.

This closed Debian bug 927182 which had been opened in April 2019 by Laurence Parry. That bug had raised concerns over the significant size increase of adding LLVM as a requirement to support Postgres JIT functionality.

Postgres supports packaging LLVM as a separate optional package without needing to recompile database binaries. Postgres is compiled once, and it performs a runtime check whether LLVM libraries are present. It gracefully disables JIT functionality in the database if LLVM libraries are not installed.

From: Andres Freund

Subject: Re: llvm dependency and space concerns

Date: 2025-01-12 00:03:43

Lists: pgsql-hackers

Hi,

On 2025-01-11 13:22:39 -0800, Jeremy Schneider wrote:

> It's a cleaner solution if JIT works more like an extension, and we can

> run a single build and split JIT into a separate package.

It does work like that. Only llvmjit.so has the llvm dependency, the main

postgres binary doesn't link to llvm. If llvmjit.so isn't available, jit is

silently disabled.

Andres

In the official community Postgres RPM/yum repository LLVM was split out to a separate package – but it had never been prioritized in the official community Debian repositories to make these code updates until now.

Christoph noted on the mailing lists that the work is not yet finished – there is still a little more that needs to be done:

From: Christoph Berg

Cc: pgsql-pkg-debian@lists.postgresql.org

Subject: Re: pg18 patch: separate package for llvm/jit

Date: Fri, 28 Feb 2025 16:02:42 +0100

Re: Jeremy Schneider

> I would like to propose this change for Postgres 18.

Hi,

I committed a change to the PG18 packaging that implements that split.

The new package is called postgresql-18-jit.

There is more work to do on each of the extension packages currently

depending on "postgresql-18-jit-llvm (= llvmversion)", that needs to be

converted to "Breaks: postgresql-18-jit-llvm (<< llvmversion)". (This

is also the reason the jit package is not called like that because the

version number there is not the PG version number.)

This will likely happen when extensions are moved to PG18 in

September.

I'm unsure if the split should be backported to PG 17 and earlier

since it will affect production systems in some way.

Christoph

CloudNativePG running on Kubernetes is a fully automated control plane that uses container images and keeps your Postgres databases running reliably, backed up, simple to patch/update and able to recover on their own if something goes wrong. The official container images provided by CloudNativePG directly use the official “PGDG” Postgres community Debian packages on top of the official docker-provided “slim” debian base image. This has the benefit that CNPG users are part of the “herd” using the same binaries which are used by Debian Postgres users globally; we’re not going to hit any corner cases from compilation flags or configure options that aren’t widely used. Another commonly used container is the Docker “official image” that you get when you type docker run postgres – these images also directly use the official Postgres community Debian packages by default.

Generally speaking, the size of docker containers can vary widely. Minimizing dependencies and reducing container size has many benefits: less stuff to patch and update over time, fewer things that can get CVEs, fewer unnecessary utilities that can be leveraged by attackers, faster build times, less disk space usage, reduced network consumption and faster startup times in environments where containers are often migrated and rescheduled across fleets (moving to new hardware where those containers had never previously run, and thus images need to be downloaded).

Let’s take a look at the sizes of common Postgres containers:

# docker images

REPOSITORY TAG SIZE

ghcr.io/cloudnative-pg/postgresql 17.4-standard-bookworm 641MB

ghcr.io/cloudnative-pg/postgresql 17.4-minimal-bookworm 413MB

postgres 17.4-bookworm 438MB

postgres 17.4-alpine3.21 278MB

Docker provides an official Postgres image based on Alpine (a Linux distribution designed to be small, simple and secure). Postgres is built from source for the Alpine container. Alpine uses the musl C library which doesn’t have the same adoption level in the general public and test coverage in the postgres community build farm as the GNU C library. Nonetheless, we see above that Docker’s official Alpine postgres image is 36% smaller than Docker’s official Debian Bookworm image. (From 438MB down to 278MB.)

Interestingly, LLVM alone seems responsible for half of the Alpine image’s size!

# docker run -it postgres:17.4-alpine3.21 apk info llvm19-libs

WARNING: opening from cache https://dl-cdn.alpinelinux.org/alpine/v3.21/main: No such file or directory

WARNING: opening from cache https://dl-cdn.alpinelinux.org/alpine/v3.21/community: No such file or directory

llvm19-libs-19.1.4-r0 description:

LLVM 19 runtime library

llvm19-libs-19.1.4-r0 webpage:

https://llvm.org/

llvm19-libs-19.1.4-r0 installed size:

153 MiB

The Alpine build might be useful for use cases where every byte matters – but it’s lacking in one of Postgres greatest strengths: extensibility. Another significant advantage of leveraging the community Debian packages is that we get free access to all of the Postgres Extensions that are already packaged for Debian. Adding a supported extension to a container is a one-liner change to the Dockerfile!

CloudNativePG builds and provides their own images on the Github Container Registry. At present there are two flavors of CNPG images: “minimal” and “standard”.

The CNPG minimal image is 413MB – about the same size as the docker image without any extensions or extras.

The CNPG standard image 641MB and includes three default extensions (pgvector, pgaudit and pg-failover-slots) as well as all Debian-supported glibc locales. The locales are responsible for 99% of the size increase.

# docker run -it ghcr.io/cloudnative-pg/postgresql:17.4-standard-bookworm dpkg-query --show --showformat='${Package}\t${Installed-Size} KB\n' locales-all postgresql-17-pgvector postgresql-17-pgaudit postgresql-17-pg-failover-slots

locales-all 227366 KB

postgresql-17-pg-failover-slots 101 KB

postgresql-17-pgaudit 106 KB

postgresql-17-pgvector 767 KB

Most users should not need these Debian glibc locales, especially since these images include ICU (which interestingly is much smaller than the Debian locales-all package). Most of us should be starting with the minimal image and customizing it with only the extensions we need.

But what about LLVM? Christoph’s commit should make JIT an optional install instead of a required install; how will this impact the minimal image size?

It took me a little work to get the syntax right, but it turns out this wasn’t too hard to test by tweaking the CNPG Dockerfile to use the official postgres community pg18 debian package snapshots.

https://github.com/ardentperf/postgres-containers/commit/6388113b36824e3a88f47faabf0ddd386b96e41a

Author: Jeremy Schneider <schneider@ardentperf.com>

Date: Sun Apr 6 21:58:45 2025 -0700

test pg18 development snapshots https://wiki.postgresql.org/wiki/Apt/FAQ#Development_snapshots

diff --git a/Dockerfile b/Dockerfile

index d4f02b7..8a03349 100644

--- a/Dockerfile

+++ b/Dockerfile

@@ -8,7 +8,12 @@ ENV PATH=$PATH:/usr/lib/postgresql/$PG_MAJOR/bin

RUN apt-get update && \

apt-get install -y --no-install-recommends postgresql-common ca-certificates gnupg && \

- /usr/share/postgresql-common/pgdg/apt.postgresql.org.sh -y && \

+ true

+

+COPY pg18snapshot.pref /etc/apt/preferences.d/

+COPY pg18snapshot.list /etc/apt/sources.list.d/

+

+RUN apt-get update && \

apt-get install -y --no-install-recommends -o Dpkg::::="--force-confdef" -o Dpkg::::="--force-confold" postgresql-common && \

sed -ri 's/#(create_main_cluster) .*$/\1 = false/' /etc/postgresql-common/createcluster.conf && \

apt-get install -y --no-install-recommends \

diff --git a/pg18snapshot.list b/pg18snapshot.list

+deb [signed-by=/usr/share/postgresql-common/pgdg/apt.postgresql.org.asc] http://apt.postgresql.org/pub/repos/apt/ bookworm-pgdg-snapshot main 18

diff --git a/pg18snapshot.pref b/pg18snapshot.pref

+Package: *

+Pin: origin apt.postgresql.org

+Pin-Priority: 1001

First I built version 17.4 locally to confirm that I would get the same size container as what’s in the official registry. Then I used the modified Dockerfile to build version 18.

# git checkout main

Switched to branch 'main'

Your branch is up to date with 'origin/main'.

# docker build . --target minimal --tag minimal17 --build-arg PG_VERSION=17.4

[+] Building 0.1s (7/7) FINISHED docker:default

=> [internal] load build definition from Dockerfile 0.0s

=> => transferring dockerfile: 1.35kB 0.0s

=> [internal] load metadata for docker.io/library/debian:bookworm-slim 0.0s

=> [internal] load .dockerignore 0.0s

=> => transferring context: 2B 0.0s

=> [minimal 1/3] FROM docker.io/library/debian:bookworm-slim 0.0s

=> CACHED [minimal 2/3] RUN apt-get update && apt-get install -y --no-install-recommends postgresql-common ca-certificate 0.0s

=> CACHED [minimal 3/3] RUN usermod -u 26 postgres 0.0s

=> exporting to image 0.0s

=> => exporting layers 0.0s

=> => writing image sha256:d2d9baf4d2384dbfe58cd14bd0adc5aaca2beff95a4a7e37712ddc26f14a3a7d 0.0s

=> => naming to docker.io/library/minimal17 0.0s

# git checkout test18

Switched to branch 'test18'

Your branch is up to date with 'ardent/test18'.

# docker build . --target minimal --tag minimal18 --build-arg PG_VERSION=18

[+] Building 0.1s (11/11) FINISHED docker:default

=> [internal] load build definition from Dockerfile 0.0s

=> => transferring dockerfile: 1.42kB 0.0s

=> [internal] load metadata for docker.io/library/debian:bookworm-slim 0.0s

=> [internal] load .dockerignore 0.0s

=> => transferring context: 2B 0.0s

=> [minimal 1/6] FROM docker.io/library/debian:bookworm-slim 0.0s

=> [internal] load build context 0.0s

=> => transferring context: 298B 0.0s

=> CACHED [minimal 2/6] RUN apt-get update && apt-get install -y --no-install-recommends postgresql-common ca-certificate 0.0s

=> CACHED [minimal 3/6] COPY pg18snapshot.pref /etc/apt/preferences.d/ 0.0s

=> CACHED [minimal 4/6] COPY pg18snapshot.list /etc/apt/sources.list.d/ 0.0s

=> CACHED [minimal 5/6] RUN apt-get update && apt-get install -y --no-install-recommends -o Dpkg::::="--force-confdef" -o 0.0s

=> CACHED [minimal 6/6] RUN usermod -u 26 postgres 0.0s

=> exporting to image 0.0s

=> => exporting layers 0.0s

=> => writing image sha256:c2471c9180750c1983177693ef21b609443c4b5aa89f9f5ffe9cb8e159ebe00d 0.0s

=> => naming to docker.io/library/minimal18 0.0s

# docker images

REPOSITORY TAG SIZE

minimal18 latest 262MB

minimal17 latest 413MB

ghcr.io/cloudnative-pg/postgresql 17.4-standard-bookworm 641MB

ghcr.io/cloudnative-pg/postgresql 17.4-minimal-bookworm 413MB

postgres 17.4-bookworm 438MB

postgres 17.4-alpine3.21 278MB

The minimal CNPG image for Postgres 18 is 262MB – that’s 34% smaller than the minimal CNPG image for Postgres 17 of 413MB.

This is great news – another reason to look forward to Postgres 18!

Testing loadBalancerSourceRanges with CloudNativePG on Azure Kubernetes

This option didn’t seem super widely documented from my initial searches online; it should be able to basically enforce layer 4 ingress/firewall rules at the individual service level. This is a quick test to check if it works.

Steps were generated with ChatGPT, and mostly worked. It missed Azure provider registration, but I figure that out easily from the Azure error message. GPT was creating the VMs after the CNPG cluster … I had to reverse that so I’d know the IP for loadBalancerSourceRanges. I had to switch the VMs to the “westus” region because of quota limits. There were a couple more tweaks but overall I got this done in an hour or two – couldn’t have done that with just google.

I used an Azure free account; never had to set up a credit card with Azure. Note that free accounts are limited to 30 days and only available for new users. This blog post happened to be the first time I’d used azure with my personal account so it qualified. I’ll need to use a pay-as-you-go account in the future.

Step 1: Create an AKS ClusterCreate resource group

az group create --name myResourceGroup --location eastus

{

"id": "/subscriptions/7460cc93-ed07-42e7-a246-3b87e52a3ad7/resourceGroups/myResourceGroup",

"location": "eastus",

"managedBy": null,

"name": "myResourceGroup",

"properties": {

"provisioningState": "Succeeded"

},

"tags": null,

"type": "Microsoft.Resources/resourceGroups"

}

Register the provider

az provider register --namespace Microsoft.ContainerService

Registering is still on-going. You can monitor using 'az provider show -n Microsoft.ContainerService'

Create AKS cluster

az aks create \

--resource-group myResourceGroup \

--name myAKSCluster \

--network-plugin kubenet \

--network-policy calico \

--load-balancer-sku standard \

--node-count 2 \

--enable-managed-identity \

--generate-ssh-keys

{

"aadProfile": null,

"addonProfiles": null,

"agentPoolProfiles": [

{

"availabilityZones": null,

"capacityReservationGroupId": null,

"count": 2,

...

"upgradeSettings": null,

"windowsProfile": null,

"workloadAutoScalerProfile": {

"keda": null,

"verticalPodAutoscaler": null

}

}

get cluster credentials

az aks get-credentials --resource-group myResourceGroup --name myAKSCluster

Merged "myAKSCluster" as current context in /home/jeremy/code/cnpg-playground/k8s/kube-config.yaml

Installing CNPG with helm

helm repo add cnpg https://cloudnative-pg.github.io/charts

helm repo update

helm install cnpg cnpg/cloudnative-pg --namespace cnpg-system --create-namespace

NAME: cnpg

LAST DEPLOYED: Fri Mar 14 00:01:16 2025

NAMESPACE: cnpg-system

STATUS: deployed

REVISION: 1

TEST SUITE: None

NOTES:

CloudNativePG operator should be installed in namespace "cnpg-system".

You can now create a PostgreSQL cluster with 3 nodes as follows:

cat <<EOF | kubectl apply -f -

# Example of PostgreSQL cluster

apiVersion: postgresql.cnpg.io/v1

kind: Cluster

metadata:

name: cluster-example

spec:

instances: 3

storage:

size: 1Gi

EOF

kubectl get -A cluster

Verifying install

kubectl get pods -n cnpg-system

NAME READY STATUS RESTARTS AGE

cnpg-cloudnative-pg-847b949f48-d4clp 1/1 Running 0 118s

Create Ubuntu client VMs

az vm create \

--resource-group myResourceGroup \

--name VM1 \

--location westus \

--image Ubuntu2404 \

--admin-username azureuser \

--generate-ssh-keys \

--public-ip-address VM1PublicIP

{

"fqdns": "",

"id": "/subscriptions/7460cc93-ed07-42e7-a246-3b87e52a3ad7/resourceGroups/myResourceGroup/providers/Microsoft.Compute/virtualMachines/VM1",

"location": "westus",

"macAddress": "60-45-BD-09-BD-FA",

"powerState": "VM running",

"privateIpAddress": "10.0.0.4",

"publicIpAddress": "104.42.23.20",

"resourceGroup": "myResourceGroup",

"zones": ""

}

az vm create \

--resource-group myResourceGroup \

--name VM2 \

--location westus \

--image Ubuntu2404 \

--admin-username azureuser \

--generate-ssh-keys \

--public-ip-address VM2PublicIP

{

"fqdns": "",